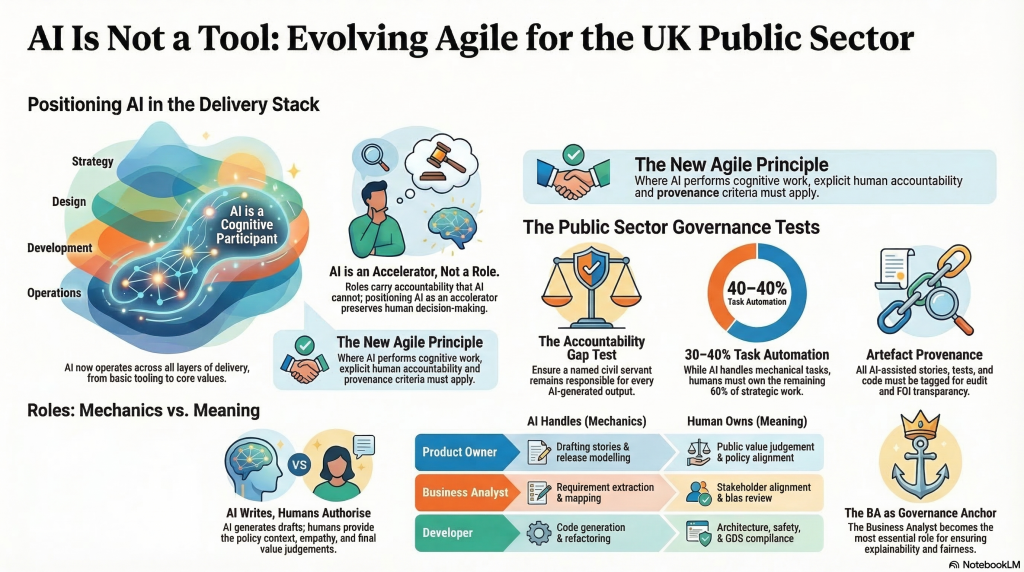

How AI reshapes roles, ceremonies, governance, and the very philosophy of Agile

| BODY OF KNOWLEDGE · DISCUSSION PAPER · 2025 | AI Positioning in Agile: A Critical Framework | Methodology · Framework · Practice · Tooling | Agile · Scrum · SAFe · LeSS · Kanban · XP · Waterfall · Iterative | Tool · Role · Accelerator: A Definitional Analysis |

| “Agile is a methodology. It deliberately says nothing about tools. But AI is not just a tool. It is a cognitive participant. And that changes everything.” (Central thesis of this paper) |

| 01 SECTION | The Fundamental Question: Where Does AI Sit in the Methodology Stack? |

Every delivery methodology (Agile, Waterfall, PRINCE2, Lean, Iterative) operates across at least four distinct layers: the values and philosophy layer (what we believe about delivery), the framework and implementation layer (how we structure roles and ceremonies), the practice and execution layer (what we actually do sprint-to-sprint), and the tooling layer (what software we use to do it). Each layer has historically been largely independent. Agile’s genius was to define a powerful values layer and leave the lower layers deliberately open.

AI disrupts this clean separation. It does not sit neatly in any one layer. A large language model used to transcribe a standup operates at the tooling layer. The same model, when it synthesises requirements from ambiguous interview notes and proposes an architectural approach, is operating at the practice layer. When it consistently makes decisions that shape what gets built and in what order, it starts to operate at the framework layer. And when its presence fundamentally alters what “self-organising team” means, it touches the values layer.

| THE DIAGNOSTIC QUESTION The right question is not “is AI a tool?” but: at which layer(s) does AI’s impact become significant enough to require explicit acknowledgement in the methodology, framework, or practice? The answer varies by AI capability level, team maturity, and deployment context. This paper proposes criteria for making that determination systematically. |

The Four-Layer Stack

| Layer | Name | Scope and examples | AI impact |
| --- | --- | --- | --- |
| 01 | Values / Philosophy | Agile Manifesto · Lean principles · Iterative philosophy (tools-agnostic by design) | Low but growing |
| 02 | Framework / Implementation | Scrum · SAFe · LeSS · Kanban · XP · DAD · Spotify · ScrumBan | Medium (role changes) |
| 03 | Practice / Execution | Ceremonies, artefacts, team behaviours, delivery cadence | High (ceremony disruption) |
| 04 | Tooling | Jira · GitHub Copilot · Otter.ai · Spinach.ai · Claude · Cursor · Testim AI | Transformative |

| 02 SECTION | The Three Positioning Options — Critique and Verdict |

When asked “what is AI to Agile?”, three answers compete. Each is partially correct. Each is also insufficient alone. Understanding why matters for deciding how to formally position AI in any delivery context.

| A: AI as Tool | B: AI as Role / Actor | C: AI as Accelerator |
| --- | --- | --- |
| AI is just another piece of software, like Jira or Miro. Teams choose to use it or not. Agile’s tools-agnostic stance covers it. | AI is a named participant in the framework: a “cybernetic teammate,” a new Scrum role, a virtual team member. | AI is a capability layer: it amplifies what existing roles can do without replacing them. Accountability stays with the human. |
| Why this fails: A tool does not make cognitive decisions. AI does. Treating AI as a tool leaves teams without governance at the moment they most need it. | Why this fails: Roles carry accountability. AI cannot be held accountable, coached, or removed. Naming AI as a role creates a dangerous accountability vacuum. | Why this works: Preserves human accountability. Compatible with all frameworks. Provides a natural home for governance requirements. Honest about what AI actually does. |
| Verdict: Partially valid at tooling layer only. Insufficient at practice layer and above. | Verdict: Conceptually attractive but creates accountability gaps. Reject as formal positioning. | Verdict: Recommended positioning. Requires role-level AI participation criteria to operationalise. |

| 03 SECTION | Agile’s Tools-Agnostic Stance and Why AI Breaks It |

The Agile Manifesto explicitly avoids prescribing tools. This is one of its greatest strengths: it decouples the philosophy from the technology of any given era. In 2001, this meant not specifying whether you should use Excel or a dedicated project tool. In 2025, it means not specifying AI tools.

But the tools-agnostic principle was designed for tools that are passive: tools that do what they are told, produce deterministic outputs, and do not participate in the cognitive work of delivery. AI fails all three of these conditions. AI produces non-deterministic outputs. It participates in cognitive work. And in some configurations (when it is generating requirements, sizing stories, and proposing architectural patterns) it is not being “told what to do” in any meaningful sense.

| THE AGNOSTIC STANCE FAILURE MODE If Agile remains silent on AI, teams will adopt AI ad hoc, without governance, without shared criteria for when AI participation in a ceremony is appropriate, and without accountability structures for AI-assisted artefacts. This is already happening. The tools-agnostic stance needs a companion principle: “Where AI participates in cognitive work, explicit accountability and governance criteria apply, regardless of the tool used.” This is not a call to rewrite the Manifesto. It is a call to extend it to acknowledge that a category of “tool” now exists that crosses the line between passive instrument and cognitive participant. |

| 04 SECTION | Framework-Level Critique: Scrum, SAFe, LeSS, Kanban, XP and Variants |

Different frameworks approach AI readiness very differently. The table below critiques each major framework and variant against three criteria: role adaptability, ceremony adaptability, and governance readiness.

| Framework | Role adaptability | Ceremony adaptability | Governance readiness | AI positioning critique |
| --- | --- | --- | --- | --- |
| Scrum | Medium | Medium | Low | Three roles can absorb AI augmentation, but the Scrum Guide is silent on AI. Story point estimation and DoD become problematic when AI generates the artefacts being reviewed. |
| SAFe | Good | Good | Emerging | SAFe has moved furthest in acknowledging AI. Risk: framework-specific AI content diverges from other frameworks, creating a fragmented skills landscape. |
| LeSS | Adequate | Adequate | Minimal | LeSS’s minimalism adapts well structurally, but its explicit rejection of tooling prescription means it offers no AI governance guidance whatsoever. |
| Kanban | Good | Good | Medium | Flow-based, role-light model adapts well. AI anomaly detection in flow metrics is a natural fit. WIP limit optimisation via AI is directly Kanban-aligned. |
| XP | Good | Good | Moderate | Engineering practices (TDD, pair programming, CI) map most naturally to AI augmentation of any framework. AI-assisted TDD and AI pair programming are XP-compatible. |
| ScrumBan | Adequate | Adequate | Low | Inherits Scrum’s ceremony risk and Kanban’s flow strength. No governance scaffolding of its own. |
| Spotify Model | Good | Fragmented | None | Tribe/squad/chapter/guild structure offers interesting AI possibilities, such as AI-as-capability within a chapter. But Spotify is not a framework and governance is entirely team-defined. |
| DAD | Good | Good | Moderate | DAD’s process-goal-based, context-sensitive approach is most philosophically aligned with AI augmentation. Under-adopted but conceptually strongest. |
| Waterfall / Traditional | Moderate | Moderate | Strong | Gate-based, documentation-heavy model has stronger governance scaffolding for AI outputs. Every artefact is formally reviewed and signed off. Risk: AI speed overwhelms gate-review cadence. |
| Iterative (DSDM, RUP) | Good | Good | Moderate | Phase-based structure with formal artefact management adapts well. MoSCoW prioritisation pairs naturally with AI-assisted backlog scoring. |

| 05 SECTION | The Criteria for AI Acknowledgement Across Methodologies |

Before any team, framework, or organisation decides how to position AI in their delivery methodology, they need a set of testable criteria that determine whether AI’s involvement in a given activity requires explicit acknowledgement, governance, or framework-level treatment. The following six-criterion model provides that test.

| 01 Cognitive participation test → Does AI make or significantly influence a decision? → Does AI generate content entering a formal artefact? → Does AI synthesise inputs shaping what gets built? → If yes to any → explicit acknowledgement required | 02 Accountability gap test → If AI output is wrong, who is accountable? → Is there a named human who reviewed the AI output? → Is AI-generated content traceable to a human decision? → If no clear accountable human → governance required |

| 03 Role displacement test → Is AI performing core role responsibility tasks? → Has the role’s time allocation shifted due to AI? → Would the role need redefining to include AI skills? → If yes → role definition update required | 04 Ceremony integrity test → Does AI participation change the ceremony’s purpose? → Does AI pre-processing reduce collaborative value? → Is AI output presented as team output in reviews? → If yes → ceremony-level AI rules required |

| 05 Artefact provenance test → Can stakeholders tell AI-generated from human-authored artefacts? → Are AI-assisted artefacts tagged in the backlog? → Is prompt history retained for governance purposes? → If no → provenance and traceability policy required | 06 Velocity distortion test → Is AI inflating velocity without value delivery? → Have story points and cycle time lost their meaning? → Is estimation uncalibrated for AI-assisted work? → If yes → metrics recalibration required |

| APPLYING THE CRITERIA: A WORKED EXAMPLE Scenario: A BA uses Claude to generate user stories from workshop notes, which are then dropped directly into the sprint backlog. Test 1 (Cognitive participation): Yes. AI generated content entering a formal artefact, so acknowledgement is required. Test 2 (Accountability gap): The test is passed only if the BA reviewed and approved the stories. If the stories went in unreviewed, a governance gap exists. Test 5 (Artefact provenance): Are the stories tagged as AI-assisted? If not, a provenance policy is needed. Test 6 (Velocity distortion): Are these stories estimated the same way as human-authored stories? If so, velocity is being artificially inflated and recalibration is needed. |
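The six tests can also be read as a checklist over one activity. The sketch below is one possible encoding and not part of the paper's model: the `Activity` fields and the `assess` function are hypothetical names, and each boolean collapses the several questions under a test into a single answer. The example values assume the BA's stories went into the backlog unreviewed, untagged, and estimated like human-authored stories; Tests 3 and 4 are not evaluated in the worked example, so they are assumed not to trigger.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    """Facts about one AI-involved activity (all field names are illustrative)."""
    ai_generates_artefact_content: bool  # Test 1
    ai_influences_decisions: bool        # Test 1
    named_human_reviewer: bool           # Test 2
    ai_performs_core_role_tasks: bool    # Test 3
    ai_changes_ceremony_purpose: bool    # Test 4
    artefacts_tagged_ai_assisted: bool   # Test 5
    estimation_calibrated_for_ai: bool   # Test 6


def assess(activity: Activity) -> list[str]:
    """Return the actions triggered by the six tests, in test order."""
    actions = []
    if activity.ai_generates_artefact_content or activity.ai_influences_decisions:
        actions.append("explicit acknowledgement")
    if not activity.named_human_reviewer:
        actions.append("governance")
    if activity.ai_performs_core_role_tasks:
        actions.append("role definition update")
    if activity.ai_changes_ceremony_purpose:
        actions.append("ceremony-level AI rules")
    if not activity.artefacts_tagged_ai_assisted:
        actions.append("provenance and traceability policy")
    if not activity.estimation_calibrated_for_ai:
        actions.append("metrics recalibration")
    return actions


# Worked example: AI-generated stories dropped straight into the backlog.
ba_scenario = Activity(
    ai_generates_artefact_content=True,
    ai_influences_decisions=False,
    named_human_reviewer=False,         # assumption: stories were not reviewed
    ai_performs_core_role_tasks=False,  # assumption: Test 3 not evaluated
    ai_changes_ceremony_purpose=False,  # assumption: Test 4 not evaluated
    artefacts_tagged_ai_assisted=False,
    estimation_calibrated_for_ai=False,
)
print(assess(ba_scenario))
```

If the BA had reviewed and approved the stories, setting `named_human_reviewer=True` would drop the governance action while leaving the other three in place.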

| 06 SECTION | Role-Based Impact Analysis — What Changes Per Role |

Across all Agile frameworks, the impact of AI is not uniform across roles. The table below maps the impact against three dimensions: how much the role definition changes, how much the skill requirement changes, and whether AI augmentation is primarily a tool use, a practice change, or a role evolution.

| Role | Role definition change | New skill requirement | AI classification | Key risk if unaddressed |
| --- | --- | --- | --- | --- |
| Product Owner | Significant | AI prompt curation, backlog architecture, output validation | Practice change + partial role evolution | PO becomes a rubber stamp on AI-generated backlogs rather than a strategic product voice |
| Scrum Master | Moderate | AI meeting intelligence, sentiment analysis interpretation, flow prediction | Tool use + practice change | Facilitation skills atrophy; SM becomes a report distributor rather than a team coach |
| Business Analyst | Transformative | AI elicitation, requirements validation, traceability governance, AI feasibility assessment | Role evolution (largest shift of any role) | BA is disintermediated if they don’t evolve; or BA’s value is hidden if AI output is attributed to “the team” |
| Developer | Significant | AI-assisted TDD, prompt engineering for code, AI code review literacy | Tool use + practice change | Velocity inflation without quality improvement; technical debt from unreviewed AI code accumulates invisibly |
| QA / Test Engineer | Moderate | AI test generation review, synthetic data governance, automated coverage analysis | Primarily tool use | Exploratory testing skills lost; QA becomes a test runner rather than a quality architect |
| UX Designer | Moderate | AI research synthesis, generative design review, AI usability analysis interpretation | Tool use + practice change | Design thinking replaced by AI aesthetic defaults; user advocacy responsibility diffused |
| Solution Architect | Low–moderate | AI pattern review, ADR validation, AI-assisted technical debt analysis | Primarily tool use | Architectural accountability shifts to AI without a clear owner; ADRs become AI drafts that no one truly owns |
| DevOps Engineer | Moderate | AI observability interpretation, IaC review, AI incident prediction | Tool use + practice change | Alert fatigue from AI-generated anomaly signals; human judgement in incident response erodes |

The Business Analyst case: why it matters most

Of all roles, the BA experiences the most transformative AI impact and is the most poorly served by current framework guidance. The BA role sits at the intersection of discovery, requirements, stakeholder management, and governance. These are precisely the activities where AI can generate outputs, but where human judgement, context, and accountability are irreplaceable.

Without explicit BA-level AI positioning, two failure modes emerge: either the BA is disintermediated (AI generates stories, BAs are seen as redundant) or the BA’s value is invisible (AI generates the content, the BA validates and contextualises, but that value is attributed to “the team” or “the tool”). Both outcomes damage the BA profession and delivery quality.

| 07 SECTION | Do We Need an AI-Native Agile Variant? Conditions and Risks |

The question of whether to create a named “AI-Native Agile” or “AI-Enhanced Scrum” variant is genuinely difficult. Here is the honest analysis.

The case for a new variant

AI changes enough about how Agile is practised — how stories are generated, how ceremonies run, how velocity is measured, how accountability is assigned — that a comprehensive update to Scrum or Agile frameworks may be warranted. SAFe is already moving in this direction.

The case against a new variant

Agile’s history with variants is not encouraging. ScrumBan, ScaledScrum, Enhanced Scrum, SAFe, LeSS — each was created to solve a legitimate problem and each added complexity, created certification bloat, and fragmented the practitioner community. An “AI-Native Agile” variant risks the same. Worse, AI evolves faster than any framework can be updated.

| THE CONDITIONS UNDER WHICH A VARIANT IS JUSTIFIED Condition 1: AI participation in delivery is so pervasive that more than 30% of formal artefacts have significant AI contribution. Condition 2: Existing role definitions are materially insufficient to cover AI-specific accountabilities (no role owns AI output validation). Condition 3: Governance requirements (ethics, bias, traceability) cannot be met within the existing framework’s accountability model. If all three conditions are met: a formal AI companion specification for the existing framework is warranted — not a new variant. If fewer than three are met: a team-level AI participation agreement, grounded in the Section 5 criteria, is sufficient. |
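The three conditions reduce to a simple all-or-nothing rule. The following is a minimal sketch of that rule; the function name, parameter names, and returned labels are illustrative choices, and how a team measures the share of artefacts with "significant AI contribution" is left open by the paper.

```python
def recommend_positioning(
    ai_artefact_share: float,
    roles_cover_ai_accountability: bool,
    governance_fits_framework: bool,
) -> str:
    """Apply the three conditions and return the recommended response.

    ai_artefact_share: fraction (0.0 to 1.0) of formal artefacts with
    significant AI contribution, however the team chooses to measure it.
    """
    conditions_met = [
        ai_artefact_share > 0.30,           # Condition 1: more than 30%
        not roles_cover_ai_accountability,  # Condition 2: no role owns AI output validation
        not governance_fits_framework,      # Condition 3: governance needs cannot be met
    ]
    if all(conditions_met):
        return "AI companion specification"
    return "team-level AI participation agreement"


print(recommend_positioning(0.45, False, False))
print(recommend_positioning(0.45, True, False))
```

Note that the threshold is strict: a share of exactly 30% does not satisfy Condition 1, following the "more than 30%" wording.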

| 08 SECTION | A Universal AI Participation Standard for Delivery Methodologies |

Rather than framework-specific AI variants, what is needed is a methodology-agnostic AI Participation Standard — a set of principles and criteria that any team can adopt to govern AI’s involvement in delivery, regardless of whether they use Scrum, SAFe, Kanban, Waterfall, or a hybrid.

Component 1: AI Participation Declaration Levels

| Level | Classification | Description and governance requirement |
| --- | --- | --- |
| Level 0 | No AI | No AI involvement in artefacts or decisions. Traditional delivery only. |
| Level 1 | AI Tooling | AI used for tooling and automation only (transcription, scheduling, formatting). No cognitive participation. |
| Level 2 | AI Assisted | AI participates in artefact generation (stories, tests, documentation) with mandatory human review before use. |
| Level 3 | AI Decision Support | AI participates in decision support (prioritisation, risk assessment, architectural suggestions) with explicit human accountability on all decisions. |
| Level 4 | AI Intelligence Layer | AI fully integrated into delivery intelligence (continuous learning, predictive planning, automated retrospectives). Full governance framework required. |
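The declaration levels above are ordered, so they map naturally onto an ordered enumeration. The sketch below is illustrative only: the `AILevel` names, the use categories in `USE_TO_LEVEL`, and the rule that a team declares the highest level among its AI uses are all assumptions, not something the standard prescribes.

```python
from enum import IntEnum


class AILevel(IntEnum):
    NO_AI = 0
    AI_TOOLING = 1
    AI_ASSISTED = 2
    AI_DECISION_SUPPORT = 3
    AI_INTELLIGENCE_LAYER = 4


# Governance requirement per level, as stated in the table (None where none is stated).
GOVERNANCE = {
    AILevel.NO_AI: None,
    AILevel.AI_TOOLING: None,
    AILevel.AI_ASSISTED: "mandatory human review before use",
    AILevel.AI_DECISION_SUPPORT: "explicit human accountability on all decisions",
    AILevel.AI_INTELLIGENCE_LAYER: "full governance framework",
}

# Hypothetical categories of AI use and the level each one implies.
USE_TO_LEVEL = {
    "tooling": AILevel.AI_TOOLING,
    "artefact_generation": AILevel.AI_ASSISTED,
    "decision_support": AILevel.AI_DECISION_SUPPORT,
    "delivery_intelligence": AILevel.AI_INTELLIGENCE_LAYER,
}


def declared_level(uses: set[str]) -> AILevel:
    """Assumed rule: a team declares the highest level among its AI uses."""
    return max((USE_TO_LEVEL[u] for u in uses), default=AILevel.NO_AI)


level = declared_level({"tooling", "artefact_generation"})
print(level.name, "->", GOVERNANCE[level])
```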

Component 2: Role-level AI augmentation agreements

For each role, a documented agreement defines what AI may do on behalf of the role, what the human retains accountability for, how AI outputs are reviewed, and how AI-generated artefacts are tagged and traced. This is not a tool policy — it is a role-level governance document.
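One way to keep such an agreement checkable is to record its four parts as structured data. This is a hedged sketch rather than a prescribed template: the `RoleAIAgreement` class and its field names are hypothetical, and the Business Analyst entries are example values only.

```python
from dataclasses import dataclass, fields


@dataclass
class RoleAIAgreement:
    """The four parts of a role-level agreement (field names are illustrative)."""
    role: str
    ai_may_do: list[str]
    human_accountable_for: list[str]
    review_process: str
    tagging_and_tracing: str

    def missing_sections(self) -> list[str]:
        """Names of sections left empty, i.e. gaps in the agreement."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Example values only; a real agreement would be written by the team.
ba_agreement = RoleAIAgreement(
    role="Business Analyst",
    ai_may_do=["draft user stories from workshop notes"],
    human_accountable_for=["story correctness", "stakeholder context"],
    review_process="",  # not yet agreed, so it is reported as a gap
    tagging_and_tracing="stories tagged AI-assisted in the backlog",
)
print(ba_agreement.missing_sections())
```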

Component 3: Ceremony AI protocols

For each ceremony, a defined protocol specifies: whether AI pre-processing of inputs is allowed, how AI-generated content is attributed, what the human facilitator is accountable for, and what triggers a human-only override of AI participation.

Component 4: Artefact provenance standard

All formal delivery artefacts carry a provenance marker: Human-authored, AI-assisted (human reviewed), or AI-generated (human approved). This is non-negotiable at Level 2 and above.
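A provenance rule of this kind is straightforward to check mechanically. The sketch below assumes artefacts are plain dictionaries with an `id` and an optional `provenance` key; that shape, and the function name `missing_provenance`, are illustrative and not tied to any particular backlog tool.

```python
from enum import Enum


class Provenance(Enum):
    HUMAN_AUTHORED = "Human-authored"
    AI_ASSISTED = "AI-assisted (human reviewed)"
    AI_GENERATED = "AI-generated (human approved)"


def missing_provenance(artefacts: list[dict], level: int) -> list[str]:
    """Return ids of artefacts without a valid marker.

    The marker is only enforced at declaration Level 2 and above.
    """
    if level < 2:
        return []
    valid = {p.value for p in Provenance}
    return [a["id"] for a in artefacts if a.get("provenance") not in valid]


# Hypothetical backlog items.
backlog = [
    {"id": "US-1", "provenance": "AI-assisted (human reviewed)"},
    {"id": "US-2"},                          # no marker at all
    {"id": "US-3", "provenance": "unknown"}, # marker not in the standard
]
print(missing_provenance(backlog, level=2))
```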

Component 5: Metrics recalibration protocol

When AI augmentation is introduced, teams recalibrate velocity, estimation, and definition of done. AI-assisted stories are estimated on review and integration effort, not generation effort. Cycle time and lead time become primary flow metrics as they are less susceptible to AI distortion.
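The recalibration rule can be illustrated with a small calculation. The sketch below rests on several assumptions: the `Story` fields are hypothetical, effort is expressed in hours for simplicity, human-authored stories are assumed to count authoring effort as well, and lead time and cycle time use the common definitions of request-to-done and start-to-done.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Story:
    generation_hours: float   # time to author or generate the story content
    review_hours: float
    integration_hours: float
    ai_assisted: bool
    requested: date
    started: date
    done: date


def estimable_effort(story: Story) -> float:
    """AI-assisted stories are sized on review and integration effort only."""
    effort = story.review_hours + story.integration_hours
    if not story.ai_assisted:
        effort += story.generation_hours  # assumption: human authoring counts
    return effort


def cycle_time_days(story: Story) -> int:
    """Days from work starting to work finishing."""
    return (story.done - story.started).days


def lead_time_days(story: Story) -> int:
    """Days from the request being raised to work finishing."""
    return (story.done - story.requested).days


story = Story(
    generation_hours=0.5, review_hours=3.0, integration_hours=5.0,
    ai_assisted=True,
    requested=date(2025, 3, 3), started=date(2025, 3, 10), done=date(2025, 3, 14),
)
print(estimable_effort(story), cycle_time_days(story), lead_time_days(story))
```

The design point is that near-zero generation time never enters the estimate for AI-assisted work, while both flow metrics are derived from dates and are unaffected by how the story was authored.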

| 09 SECTION | Conclusion and Recommended Positioning |

| ● AI is not a tool. At the practice layer and above, AI is a cognitive participant. Treating it as a passive tool leaves teams ungoverned. |

| ● AI is not a role. Roles carry accountability. AI cannot. Naming AI as a team member creates an accountability vacuum that will cost delivery quality and organisational trust. |

| ● AI is an accelerator — a capability layer that raises individual role performance toward team-level output. This framing preserves human accountability, is compatible with all frameworks, and scales with AI’s evolving capability. |

| ● Agile’s tools-agnostic stance needs a companion principle for cognitive participants: “Where AI participates in cognitive work, explicit human accountability and provenance criteria apply, regardless of the tool used.” |

| ● Framework variants are not the answer. A universal AI Participation Standard — methodology-agnostic, role-grounded, and criteria-based — is more durable than any framework-specific AI variant. |

| ● The Business Analyst is the linchpin role. BA skills in requirements intelligence, output validation, traceability, and governance are the human competencies most critical for making AI-assisted delivery trustworthy, accountable, and valuable. |

The ultimate test of any AI positioning in a delivery methodology is not whether it is theoretically elegant — it is whether a team can answer three questions clearly: Who is accountable for this AI-generated artefact? How do we know it is correct? And what happens when it is wrong?

| Sources: BA AI Body of Knowledge · Scaled Agile Framework AI documentation · XP 2025 AI & Agile Research · Scrum.org practitioner guidance · P&G “Cybernetic Teammate” study (Dell’Acqua et al. 2025) · BA Competency Framework · BA AI Maturity Assessment Tool |

References: AI Agile References Contents

AI-Augmented Agile and BA Capability Documents

| Name | Short Description |
| --- | --- |
| AI Is Not a Tool (Core Paper) | Foundational paper defining AI as a cognitive participant rather than a tool, and positioning AI as an accelerator across Agile layers. Establishes the core argument that Agile must evolve to include governance, accountability, and provenance. |
| AI Is Not a Tool (Public Sector Version) | Adaptation of the core thesis for government delivery, aligned to GDS and DDaT. Focuses on auditability, fairness, FOI, and public trust, with governance tests and role accountability. |
| AI Is Not a Tool (GDS Slide Deck) | Presentation version of the framework for public sector teams. Introduces the four-layer stack, governance tests, and role impacts in a format suitable for training and stakeholder alignment. |
| AI Positioning in Agile BoK | Analytical body of knowledge that evaluates where AI sits across methodology, framework, practice, and tooling layers. Compares positioning options (tool, role, accelerator) and provides decision criteria. |
| AI-Augmented Agile Roles and Tools Framework | Practical reference mapping Agile roles to AI capabilities, showing what AI handles versus what humans own, with associated tools and impact levels across roles. |
| AI-Augmented Agile Roles and Tools (HTML Visual Framework) | Designed, visual version of the roles and tools framework. Suitable for web publishing, dashboards, or interactive knowledge hubs. Emphasises usability and presentation. |
| Capability Pack: AI-Augmented Requirements Management | End-to-end training and governance suite including slide decks, workbook, maturity model, and frameworks like AARMF and AIRL. Designed for organisational capability uplift. |
| Guide / Playbook Model (Cognitive Delivery Playbook) | Full operating model for AI-augmented delivery. Covers governance, lifecycle, tooling, roles, and organisational processes, including Confluence and Notion implementations. |
| The Core Principle Document | Conceptual anchor stating that AI accelerates mechanics while humans own meaning. Explains how ceremonies and roles shift in practice and quantifies AI impact on delivery efficiency. |
| AI Agile Roles and Tools (Supporting Doc) | Supporting synthesis document linking BA BoK, competency frameworks, and maturity models into a unified role-impact view. Provides structured mapping for implementation. |

What this table actually gives you

If you step back, these documents form a layered system rather than separate assets:

- Philosophy layer: Core Paper, Core Principle
- Methodology and governance layer: AI Positioning BoK, Public Sector Version
- Execution layer: Roles and Tools Framework, HTML version
- Capability and deployment layer: Capability Pack, Playbook Model
- Communication layer: GDS Slide Deck