“Your digital life should belong to you in a way that is meaningful, enforceable, and technically real.”

PART I — PREAMBLE

Why This Argument Begins Where Privacy Ends

We have spent years talking about privacy as if it were the defining digital issue of our time. It is not. Privacy matters, but it addresses only one dimension of a much larger, structural problem. The deeper question is control. Who controls the record of your digital life? Who can move it, delete it, or profit from it? Who can study it, reshape it, and deploy it to influence your choices, opportunities, and public voice?

For most people, the internet is no longer a peripheral utility. It is where memory lives, where relationships are maintained, where work is displayed, identity is performed, communities are built, and influence is accumulated. Posts, comments, subscriptions, follows, reactions, playlists, uploads, searches, and message trails have become woven into the fabric of social and economic reality. Yet the structures that hold this reality are mostly private, closed, and designed to favour platform power over user agency.

That is the condition this body of work sets out to change. And the principle it builds from is deceptively simple: your digital life is yours. Not metaphorically. Not by default within the limits of a terms-of-service agreement. Actually, legally, and technically yours, with enforceable rights, real portability, and meaningful accountability.

The Structural Problem: Digital Feudalism

The current digital economy is often framed as a fair exchange. Platforms provide services; users receive convenience, connectivity, reach, and storage; in return, platforms process data. That framing is incomplete to the point of being misleading.

Modern platforms do not merely host user activity. They extract value from it. Every post, follow, pause, click, tag, like, watch pattern, interaction, and social connection feeds systems of monetisation, prediction, recommendation, and retention. User behaviour improves products, trains machine learning models, strengthens market position, and deepens network effects. The user is not a passive customer at the edge of the system. The user is a central source of value creation. Yet users rarely have meaningful visibility into what is collected, how it is structured, what inferences are drawn, where data travels, or how much it is worth.

This asymmetry of power has produced what the documents in this collection describe as digital feudalism. People build their public and private lives inside privately owned digital estates, subject to rules they did not negotiate, and then discover they cannot leave without abandoning years of identity, interaction history, and creative output. The platform does not merely have customers. It has subjects.

Why Digital Sovereignty Is the Right Frame

Traditional privacy law has done important work. But its focus on collection, processing, notice, consent, and access is not sufficient to address the depth of the problem. Privacy is a right of non-exposure. Digital sovereignty is a right of agency. It concerns not merely what others cannot do with your data, but what you can do with it yourself.

Digital sovereignty means the capacity to access the full record of your digital existence; to move it to another service without losing continuity or context; to understand how it is being used; to challenge unfair practices; and to deploy tools, including AI tools, that act in your interest rather than the platform’s. It treats digital data not as a byproduct of platform use, but as a form of personal property and an extension of identity.

This is the foundational argument that runs through the entire body of work assembled here. It begins with the original problem statement and moves through public advocacy, policy architecture, draft legislation, and technical implementation. Each document has a distinct role. Together they constitute a coherent and actionable framework for reform.

We have spent years talking about privacy as if it were the main digital issue of our time. It is not. Privacy matters, but it is only one part of a much larger problem. The deeper issue is control. Who controls the record of your digital life? Who decides whether you can move it? Who can delete it? Who can profit from it? Who can study it, reshape it, and use it to train systems that influence your choices, opportunities, and voice?

For most people, the internet is no longer just a set of websites and apps. It is where memory lives. It is where relationships are maintained. It is where work is displayed, identity is performed, communities are built, and influence is accumulated. Our posts, comments, subscriptions, follows, reactions, playlists, uploads, searches, and message trails have become part of our social and economic reality. Yet the structures that hold this reality are mostly private, closed, and designed to favour platform power over user agency.

That is why digital sovereignty matters. Digital sovereignty is not a slogan for technologists or policymakers. It is a practical civic principle. It means that your digital life should belong to you in a way that is meaningful, enforceable, and technically real. It means you should be able to access the full record of your digital existence, move it to another service, understand how it is being used, challenge unfair practices, and deploy tools that act in your interest rather than the platform’s.

This is the argument running through the attached body of work. Taken together, these documents do more than call for reform. They offer a coherent framework that moves from public manifesto to policy paper, from draft legislation to technical implementation. They argue that digital rights must evolve beyond privacy and consent into a new architecture of ownership, portability, accountability, and public interest governance.

We do not simply use platforms, we build value inside them

The current digital economy is often presented as a fair exchange. Platforms provide services. Users get convenience, connectivity, reach, and storage. In return, platforms process data.

That description is incomplete to the point of being misleading. The modern platform economy does not merely host user activity. It extracts value from it. Every post, follow, pause, click, tag, like, watch pattern, interaction, and social connection contributes to systems of monetisation, prediction, recommendation, and retention. User behaviour improves products, trains models, strengthens market position, and deepens network effects. The user is not a passive customer at the edge of the system. The user is a central source of value creation.

Yet people rarely have meaningful visibility into what is collected, how it is structured, what inferences are drawn from it, where it travels, or how much value it generates. They are allowed limited access to fragments of their own record, often in forms that are hard to interpret and even harder to reuse. They may be able to delete a post but not erase its downstream impact. They may download an archive but not migrate their digital life in a usable way. They may leave a service, but at the cost of losing continuity, context, and community.

This is not simply a consumer inconvenience. It is a structural power imbalance. A great deal of public life now happens inside privately governed environments. That means lock-in is no longer just a market issue. It has become a civic issue. When a person cannot leave a platform without abandoning years of identity, interaction history, and creative output, the platform does not merely have customers. It has subjects.

Privacy alone is too small a frame

Traditional privacy law has done important work, but it has limits. It tends to focus on collection, processing, notice, consent, and access. Those remain necessary. They are not sufficient.

The documents make a broader claim. The challenge is not just whether platforms collect too much data. It is whether people have enforceable rights over the life they have built online. That includes not only personal data in a narrow legal sense, but also interaction history, social context, creative continuity, platform dependence, economic contribution, and the ability to delegate rights management to trusted tools.

A rights framework for the platform age must therefore address more than secrecy. It must address movement, continuity, comprehension, leverage, and institutional accountability. It must ask not only what platforms are allowed to know, but what users are allowed to do.

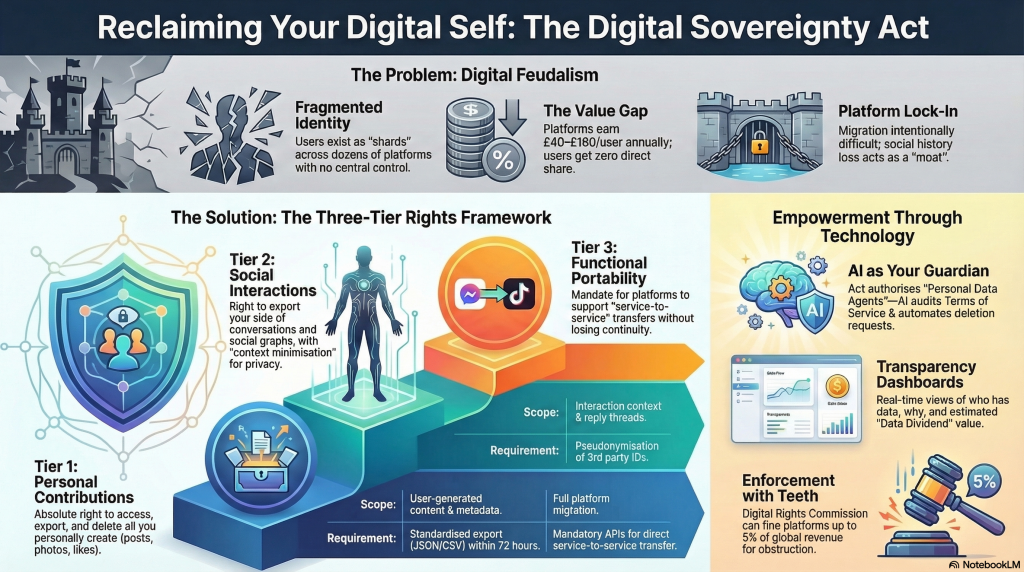

A practical framework, the three tiers of digital sovereignty

One of the strongest contributions across the documents is the three-tier model for digital rights. It replaces vague rhetoric with a structure that can guide legislation, product design, standards work, and enforcement.

Tier 1, your personal archive

The first tier covers the material you directly create or generate. This includes posts, uploads, comments, messages where appropriate, profile information, preferences, interaction logs, and associated metadata. The principle here is direct and hard to dispute. You should have a clear, non-waivable right to access, export, delete, and reuse this material in complete, machine-readable form.

This right must mean more than a symbolic download button. Exports should preserve timestamps, organisational structure, provenance, and relationship data where relevant. Formats should be usable, interoperable, and properly documented. Requests should be processed within defined timeframes. Deletion should not be treated as a vague promise. It should be evidenced and traceable.
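As a rough illustration of what a complete, machine-readable export might look like, the sketch below serialises a few items to JSON while keeping timestamps, provenance, organisational structure, and reply relationships. The `ArchiveItem` fields, the `export_archive` function, and the `schema_version` value are assumptions made for this example; none of them is defined in the source documents.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class ArchiveItem:
    """One user-created item in a hypothetical Tier 1 export."""
    item_id: str
    kind: str                          # e.g. "post", "comment", "upload"
    created_at: str                    # ISO 8601 timestamp
    body: str
    source_platform: str               # provenance: where the item originated
    in_reply_to: Optional[str] = None  # relationship data, where relevant
    collection: Optional[str] = None   # organisational structure (album, playlist)


def export_archive(items: List[ArchiveItem], schema_version: str = "0.1") -> str:
    """Serialise items into a single documented JSON export."""
    document = {
        "schema_version": schema_version,  # illustrative version label
        "exported_at": datetime.now(timezone.utc).isoformat(),
        "item_count": len(items),
        "items": [asdict(item) for item in items],
    }
    return json.dumps(document, indent=2, sort_keys=True)
```

The design point is that an export of this kind carries its own structure: a receiving tool can rebuild threads and collections from `in_reply_to` and `collection` rather than guessing from file names.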

Tier 2, your interaction context

Digital life is social. A post without replies, a playlist without usage history, a community without thread structure, a professional network without connection context: these are all incomplete records. The second tier recognises that a person’s digital existence includes context built through interaction with others.

This is where many simplistic data ownership arguments break down. Not everything can be exported without regard for third-party rights. Conversation threads, group interactions, moderation records, and social graphs often involve multiple people with legitimate privacy claims.

The framework’s answer is measured rather than extreme. It does not abandon context, nor does it ignore other people’s interests. Instead, it proposes contextual portability supported by minimisation, pseudonymisation, and rule-based handling of shared material. The aim is to preserve continuity and meaning while protecting the rights of others.

That balance matters. Without context, data portability becomes a hollow exercise. With no safeguards, it becomes reckless. The goal is useful continuity with lawful boundaries.
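One way to picture contextual portability is a thread export that keeps the reply structure and the exporting user's own words, while replacing other participants' identifiers with stable pseudonyms and withholding their text. The sketch below uses a keyed hash (HMAC-SHA256) for the pseudonyms. The function names, the message fields, and the rule of withholding third-party text are assumptions for illustration, not rules taken from the framework.

```python
import hashlib
import hmac
from typing import Dict, List


def pseudonym(user_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonym from a user id with a keyed hash."""
    digest = hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return "user_" + digest[:12]


def export_thread(messages: List[Dict], owner_id: str, secret_key: bytes) -> List[Dict]:
    """Export a thread for owner_id, pseudonymising and minimising third parties."""
    exported = []
    for msg in messages:
        is_owner = msg["author"] == owner_id
        exported.append({
            "id": msg["id"],
            "reply_to": msg.get("reply_to"),  # thread structure is preserved
            "author": owner_id if is_owner else pseudonym(msg["author"], secret_key),
            # Minimisation (assumed rule): third-party text is withheld.
            "text": msg["text"] if is_owner else None,
        })
    return exported
```

Because the same key always yields the same pseudonym, the exported thread still shows who replied to whom, without revealing who the other participants are.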

Tier 3, portability that actually works

The third tier is where the framework becomes truly transformative. It insists that portability is not complete unless data can move into another environment and remain functional there. A person should not merely be able to leave with a pile of files. They should be able to re-establish themselves elsewhere with legible history, transferable records, and as much continuity as possible.

That requires more than user rights language. It requires standards, interfaces, and obligations on services. The documents point toward structured schemas, signed portability packages, service-to-service transfer mechanisms, import support, migration receipts, and user-authorised continuity notices to followers or contacts where appropriate.

This is the difference between notional freedom and practical freedom. If leaving a platform means losing your history, your audience, your playlists, your working archive, your social traces, and your reputational continuity, then you were never truly free to leave.
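The signed portability packages and migration receipts mentioned above could work roughly as follows. The exporting service lists a hash for every file and signs the list. The receiving service checks the signature and each file, then reports what it accepted. This is a minimal sketch with assumed names (`build_package`, `verify_and_receipt`). It uses a shared-key HMAC only to stay within the Python standard library; a real scheme would more plausibly use public-key signatures so that the receiver cannot forge packages.

```python
import hashlib
import hmac
import json
from typing import Dict


def _sign(manifest: Dict[str, str], signing_key: bytes) -> str:
    """Sign the canonical JSON form of the manifest."""
    payload = json.dumps(manifest, sort_keys=True).encode("utf-8")
    return hmac.new(signing_key, payload, hashlib.sha256).hexdigest()


def build_package(files: Dict[str, bytes], signing_key: bytes) -> Dict:
    """Exporter side: hash every file and sign the resulting manifest."""
    manifest = {name: hashlib.sha256(data).hexdigest()
                for name, data in sorted(files.items())}
    return {"manifest": manifest, "signature": _sign(manifest, signing_key)}


def verify_and_receipt(package: Dict, files: Dict[str, bytes], signing_key: bytes) -> Dict:
    """Importer side: check signature and file hashes, then issue a receipt."""
    expected = _sign(package["manifest"], signing_key)
    signature_ok = hmac.compare_digest(expected, package["signature"])
    imported, rejected = [], []
    for name, expected_hash in package["manifest"].items():
        data = files.get(name)
        if data is not None and hashlib.sha256(data).hexdigest() == expected_hash:
            imported.append(name)
        else:
            rejected.append(name)  # missing or altered in transit
    return {
        "signature_ok": signature_ok,
        "imported": imported,
        "rejected": rejected,
        "complete": signature_ok and not rejected,
    }
```

The receipt gives the user, and potentially a regulator, a concrete record of whether the migration arrived intact rather than a bare claim that it did.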

AI should not only be regulated, it should also be repurposed for the user

A striking feature of the material is that it does not treat AI only as a threat. It recognises the dangers clearly: profiling, opaque inference, manipulation, extractive training, and asymmetric power. But it also asks a more ambitious question. What would AI look like if law required it to serve the citizen rather than the platform?

That question leads to one of the most original proposals in the framework, the Personal Data Agent.

The Personal Data Agent is imagined as an AI-enabled tool operating under the user’s authority. Its job is not to maximise engagement, sell advertising, or profile vulnerability. Its job is to help a person understand and exercise their rights. It could review terms of service, explain legal and technical implications, identify conflicts with statutory protections, track data flows, automate access and deletion requests, prepare portability actions, and present compliance information in plain language.

This is a powerful shift in regulatory imagination. Much of the AI debate assumes that the citizen needs protection from intelligence systems owned by powerful actors. That remains true. But citizens may also need intelligent tools of their own. Rights become far more meaningful when people can delegate complexity to systems that are legally aligned with their interests.

Of course, this only works if strict safeguards are built in. The documents are right to stress logging, transparency, certification, clear separation between observed data and inferred insights, prohibition of covert sensitive inference, and protection against platform retaliation. Without those guarantees, a so-called agent could simply become another surveillance layer.

The lesson is important. AI governance should not stop at restrictions. It should also create lawful room for empowering tools that strengthen human agency.

Rights without implementation are only aspirations

Many policy ideas fail because they remain abstract. They sound good in principle but never become enforceable in practice. One of the reasons this body of work stands out is that it takes implementation seriously.

The Digital Sovereignty Implementation Platform, described in the BRD and SRS materials, gives institutional shape to the rights framework. It proposes an operational system that supports users, platforms, regulators, and certified agents through structured workflows, evidence bundles, dashboards, audit trails, migration services, provenance tracking, deletion proofing, and compliance reporting.

This matters because digital rights only become real when they can be verified.

A right to deletion must be accompanied by proof that deletion occurred and, where relevant, propagated through linked systems. A right to portability must be accompanied by schema validation, export completeness indicators, signed packages, and evidence that the receiving service can interpret what was sent. A right to transparency must be supported by logs, dashboards, machine-readable notices, and regulator access to auditable records.
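To make the idea of evidenced deletion concrete, one common technique is an append-only log in which each entry includes the hash of the previous one, so that any later alteration is detectable. The sketch below is an assumption about how such evidence might be recorded, not the DSIP design. The event fields and function names are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(event: Dict, prev_hash: str) -> str:
    body = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_event(log: List[Dict], event: Dict) -> Dict:
    """Append an event, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    entry = {
        "event": event,
        "prev_hash": prev_hash,
        "entry_hash": _entry_hash(event, prev_hash),
    }
    log.append(entry)
    return entry


def verify_chain(log: List[Dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if _entry_hash(entry["event"], prev_hash) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True
```

A log like this does not prove that data is gone from every system. It does make the platform's own account of each deletion step tamper-evident and auditable.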

The documents also make an intellectually honest point that should be preserved in any final framework. In the real world, data is often incomplete, fragmented, duplicated, or hard to classify. A serious system should not pretend otherwise. Instead, it should provide provenance, uncertainty labels, confidence indicators, and coverage reports so that both users and regulators understand where the record is complete and where it is not.

That is not a weakness. It is what mature governance looks like.
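A coverage report of the kind described here can be very simple. The sketch below labels each data source as complete, partial, missing, or unknown, and computes an overall coverage figure only from sources whose expected size is known. The class, field names, and labels are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class SourceCoverage:
    """Coverage facts for one data source (field names are illustrative)."""
    source: str
    retrieved: int
    expected: Optional[int] = None  # None means the true total is unknown
    confidence: str = "medium"      # declared confidence: "high", "medium", "low"


def status_label(entry: SourceCoverage) -> str:
    if entry.expected is None:
        return "unknown"
    if entry.retrieved >= entry.expected:
        return "complete"
    return "partial" if entry.retrieved > 0 else "missing"


def coverage_report(entries: List[SourceCoverage]) -> Dict:
    """Summarise where the record is complete and where it is not."""
    known = [e for e in entries if e.expected]  # skips unknown and zero totals
    total_expected = sum(e.expected for e in known)
    total_retrieved = sum(min(e.retrieved, e.expected) for e in known)
    return {
        "rows": [
            {"source": e.source, "status": status_label(e), "confidence": e.confidence}
            for e in entries
        ],
        "overall_coverage": total_retrieved / total_expected if known else None,
        "unknown_sources": [e.source for e in entries if e.expected is None],
    }
```

Reporting unknown sources separately, instead of folding them into a single percentage, keeps the report honest about what it cannot measure.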

The political challenge is not complexity, it is resistance

The barriers to this agenda are not mainly technical. They are political and economic.

Platforms benefit from lock-in. They benefit from opacity. They benefit when leaving is painful, when rights are fragmented, when standards remain weak, and when users cannot easily understand the value of what they produce. Any serious attempt to establish digital sovereignty will therefore be met with predictable forms of resistance.

The objections will sound familiar. Interoperability will be framed as a security risk. Export and migration will be framed as privacy risks. Standardisation will be framed as anti-innovation. Platform duties will be framed as disproportionate burdens. User-facing AI tools will be framed as dangerous automation. Smaller services will warn of compliance costs. Dominant services will warn of systemic instability.

These concerns should be assessed carefully, not dismissed. But they should not be allowed to function as automatic vetoes. The documents offer sensible design responses, including scoped credentials, consent receipts, encryption, role-based access, rate limiting, receiving-platform verification, phased implementation, platform size thresholds, contextual minimisation, and regulated certification models.

Good policy does not ignore counterarguments. It anticipates them, narrows them, and prevents them from being used as permanent excuses for inaction.

The economic question cannot be ignored

One of the most ambitious elements of the framework is that it does not stop at control. It also raises the issue of value.

If users generate the content, signals, behavioural patterns, and interaction structures that platforms monetise, then governance must eventually confront the economic dimension of digital life. The documents suggest several routes, including disclosure around data-derived value, public interest funding mechanisms, collective structures for user bargaining, and support for digital commons infrastructure.

This is politically more difficult than access rights or portability standards, but it follows naturally from the same diagnosis. The issue is not only misuse. It is extraction. Once data is understood not simply as a privacy object but as an economic input, questions of fairness, compensation, redistribution, and institutional design move closer to the centre of policy debate.

The point is not that every user interaction should be individually priced and traded. That would collapse civic life into a marketplace. The stronger argument is that where digital ecosystems systematically derive value from user-generated activity, public policy should ensure that some of that value supports rights-preserving infrastructure, user power, and civic accountability.

Why this matters now

This debate can no longer be deferred.

AI systems are becoming more capable. Platform dependence is deepening. More of everyday life is mediated through digital records that individuals cannot fully access or control. Identity, reputation, memory, and even livelihood increasingly depend on systems governed by private entities whose incentives do not align neatly with the public interest.

That means the cost of delay is rising. Every year without strong portability, auditable transparency, user-aligned delegation, and interoperable rights makes the digital environment harder to reform. It increases concentration. It deepens dependence. It normalises asymmetry.

The attached documents do not solve every issue. No single framework could. But they do something rare and valuable. They connect moral argument, legal structure, and technical implementation. They show that digital sovereignty is not a utopian demand. It is a governable agenda.

The standard policymakers should adopt

Any serious digital rights regime should be judged by whether an ordinary person can do a few simple things without specialist help.

Can they see what a platform holds about them?

Can they move it somewhere useful?

Can they delete it and obtain proof?

Can they understand how it is being used, shared, or monetised?

Can they use protective tools that act in their interest without being blocked or punished?

That is the practical test. It is also the democratic test.

Digital sovereignty is not an abstract theory for policy conferences. It is the next layer of civil infrastructure. If rights such as property, mobility, due process, transparency, and bargaining power matter offline, they must be translated into the digital environment with equal seriousness.

The age of passive users should end here. The next phase of the internet should treat people not as temporary account holders inside rented systems, but as rights-bearing citizens of digital life.

PART II — THE DOCUMENTARY FRAMEWORK

Source documents, outlines, and references: data ownership documents

| Document name | Short description | Relevance |
| --- | --- | --- |
| 03_Public_Manifesto_Digital_Sovereignty.docx | Public-facing manifesto that frames digital sovereignty as a civic cause rooted in ownership, freedom to leave platforms, and user protection. | Strong for public narrative, campaign language, and accessible framing. |
| 01_Policy_Paper_Digital_Sovereignty_Act.docx | Full policy paper setting out the three-tier rights model, platform duties, AI guardianship, and the broader legal and economic rationale. | Core analytical foundation for the article. |
| 02_Draft_Legislation_Digital_Sovereignty_Act.docx | Draft legal text with statutory provisions covering access, portability, deletion, enforcement, obligations, and penalties. | Shows how the principles can be translated into law. |
| THE DIGITAL SOVEREIGNTY ACT.docx | Expanded legislative draft with stronger institutional mechanisms, Personal Data Agents, collective rights, and public-interest funding structures. | Useful for the enforcement, institutional, and economic dimensions. |
| Policy Paper.docx | Policy-focused document on interoperability, context-sensitive portability, migration mechanisms, and AI-assisted rights enforcement. | Valuable for the cross-platform and international governance angle. |
| The Age of Fragmented Selves.docx | Conceptual and philosophical framing of digital identity as fragmented across platforms and in need of new sovereignty models. | Strong for narrative depth and intellectual framing. |
| BRD and SRS Digital Sovereignty Implementation Platform (DSIP).docx | Business and system requirements for implementing the framework through auditable workflows, APIs, dashboards, and compliance tooling. | Critical for demonstrating technical feasibility. |
| concept.docx | Strategy and synthesis document that sharpens risks, opportunities, positioning, and rollout logic. | Useful as a framing and consolidation layer. |
| a policy document that deals with our data living in various social media pl.docx | Early origin document outlining the core problem of fragmented platform-held data, weak user control, and the need for stronger rights. | Important as the original conceptual seed of the project. |

How Each Document Contributes to the Argument

The following documents do not repeat one another. Each occupies a distinct position in a chain that runs from conceptual origin to legislative text to technical specification. Read individually, each is a substantial contribution. Read together, they form an integrated programme for reform.

- Source Brief: Original Problem Statement: The generative origin of the entire project. This document articulates the raw, first-person civic question: what happens to the data I have placed into social media platforms, and what rights should I have over it? It frames the three-tier structure (personal contributions, interaction context, and portability) as intuitive demands before they become legal categories. It also raises the forward-looking questions about AI, data reuse, deletion rights, legislative gaps across jurisdictions, and the role of a certified extraction mechanism. Every subsequent document is, in some sense, an answer to the questions posed here.

- The Case for Digital Sovereignty: The intellectual and rhetorical anchor of the framework. This essay makes the case that privacy alone is an insufficient frame, that digital sovereignty is a practical civic principle, and that a coherent architecture of ownership, portability, accountability, and public interest governance is both necessary and achievable. It contextualises the broader body of work and provides the argumentative spine for the policy and legislative documents that follow.

- Your Digital Life Is Not a Rental, It Is a Right: The bridging document between popular understanding and structural analysis. It translates the core argument for a general audience, establishing that the platform economy is not a fair exchange but a power imbalance, and then introduces the three-tier model, AI accountability, enforcement mechanisms, and the role of the Digital Sovereignty Implementation Platform. It serves as the most accessible entry point into the full framework.

- The Age of Fragmented Selves: A Policy Framework for Digital Sovereignty: The conceptual architecture document. Framed as a call for a new legal and social foundation, it articulates the three pillars of digital sovereignty (the Personal Archive, the Social Context, and Interoperable Portability) and redefines the relationship between users, platforms, and AI. It introduces the concept of platform stewardship (as distinct from ownership) and lays out the ethical and governance principles that underpin the legislative proposals.

- Public Manifesto: Your Data. Your Rights. Your Future.: The mobilisation document. Written for broad public reach, this manifesto translates the policy argument into direct, accessible advocacy. It names the problem plainly, quantifies the value users generate, articulates three fundamental rights (Your Stuff Is Yours; You Can Leave Anytime; AI Must Work for You), and makes the case for why ordinary citizens should support reform. It is the campaigning face of the framework.

- Policy Paper: Digital Sovereignty Act (01): The comprehensive policy architecture. This paper presents the full analytical and normative case for the Digital Sovereignty Act: the crisis of fragmented digital identity, the hidden value exchange in the data economy, the acceleration of AI risk and opportunity, and the three-tiered rights model in detail. It defines platform obligations, AI governance principles, enforcement mechanisms, and the institutional infrastructure required. It is the primary reference for policymakers and legislators.

- Policy Paper: Digital Interaction Sovereignty, Portability, and Platform Accountability: The comparative and jurisdictional policy paper. This document maps the existing legislative landscape across the EU, UK, and US, identifies gaps in current portability and interoperability rights, and proposes a practical legislative and technical pathway compatible with existing frameworks. It introduces the interaction sovereignty concept, addresses the privacy-portability tension in Tier 2 data, and provides implementable definitions and technical standards grounded in current regulatory realities.

- Draft Legislation: Digital Sovereignty Act of 2026 (02): The primary legislative instrument. This document translates the policy framework into statutory form: definitions, tiered user rights (access, export, deletion, reuse, portability, data dividends), platform obligations, AI system requirements, enforcement mechanisms, penalties, and transitional provisions. It provides the legal architecture that makes every other document in the set enforceable.

- The Digital Sovereignty Act: Alternative Legislative Draft: A companion legislative draft with distinct emphases. This version sharpens several provisions, notably the data dividend mechanism, the Personal Data Agent certification regime, and the extraterritorial scope of the Act. Read alongside the primary draft, it surfaces the policy choices and trade-offs inherent in translating rights principles into enforceable statutory language.

- BRD and SRS: Digital Sovereignty Implementation Platform (DSIP): The technical implementation specification. This document translates statutory rights into auditable software architecture: the user-side Personal Data Vault, the rights execution console, the platform-side export and migration APIs, the regulator-grade audit infrastructure, and the Personal Data Agent certification system. It is the engineering blueprint that ensures the legislation can be operationalised and enforced in practice.

Taken together, these documents form a complete reform programme: from the original civic question to intellectual argument, public advocacy, policy analysis, comparative law, primary and alternative legislation, and technical specification. The chain is deliberately complete. A movement for digital sovereignty requires each of these registers (the popular, the analytical, the legal, and the technical), or it risks remaining at the level of aspiration.

PART III — CONCLUSION

From Aspiration to Architecture

The argument assembled across this body of work is neither utopian nor merely technical. It is a practical programme rooted in a clear diagnosis of a structural problem: that the people who generate the value of the digital economy do not control it, cannot easily leave it, and have no meaningful recourse when it works against their interests.

The three-tier model at the heart of the framework (personal archive, interaction context, and interoperable portability) is not an abstract construct. It reflects the actual shape of digital life as people experience it. Tier 1 is the content you consciously create. Tier 2 is the social context that gives your digital life meaning. Tier 3 is the freedom to move: to leave a platform without leaving yourself behind. Each tier requires a distinct set of rights, obligations, and technical mechanisms. Together they constitute the minimum architecture of genuine digital sovereignty.

The Necessary Role of Artificial Intelligence

AI runs through this framework in two directions. In the current system, AI is primarily an instrument of platform power: it personalises feeds to maximise engagement, infers sensitive attributes from behavioural data, optimises pricing and content moderation at scale, and trains on user-generated material without meaningful consent or compensation. The framework confronts this directly.

But AI also represents the most powerful tool available to users seeking to exercise their rights. The Personal Data Agent, a certified, user-controlled AI system that can audit terms of service, monitor data flows, automate export requests, and flag breaches, is not a futuristic addition to the framework. It is a practical necessity. Without intelligent tooling, rights that exist on paper will remain inaccessible to most people. The legislation must therefore mandate both the restriction of AI-enabled exploitation and the enablement of AI-assisted self-defence.

What Genuine Reform Requires

The documents in this collection are clear that reform at the level of privacy tweaks or transparency notices is insufficient. What is required is a genuine rebalancing of power, one that changes the terms on which platforms are permitted to operate, not merely how they are required to communicate.

This means, in practice:

- Statutory rights that are non-waivable, not buried in terms of service

- Platform obligations that are technically specified and independently auditable

- Data portability that is functional, not symbolic, including service-to-service transfer, standard schemas, and import support

- Deletion rights that are verifiable, not performative, with cryptographic proof and downstream purging

- AI governance that distinguishes between AI that serves the platform and AI that serves the user

- Enforcement mechanisms with teeth, including meaningful penalties, a dedicated regulatory body, and a private right of action

- A Data Dividend framework that begins to return economic value to the people who generate it

None of this is impossible. Several jurisdictions have already established meaningful foundations: the GDPR’s portability provisions, California’s consumer data rights, and emerging interoperability mandates in the EU Digital Markets Act. The framework presented here builds on these foundations and closes the gaps they leave open.

The Stakes of Inaction

The case for urgency is not rhetorical. The window for meaningful reform is narrowing. As AI capabilities accelerate, the value gap between platform and user will widen. As consolidation continues, the leverage of major platforms over regulators and legislators will increase. As users become ever more deeply embedded in platform ecosystems, the practical cost of exit, already high, will rise further.

A generation is growing up whose entire social, creative, and professional lives are being built inside privately governed systems. If the legal and technical architecture of digital sovereignty is not established now, before the ecosystem hardens further, the opportunity for meaningful reform may pass. What is locked in today shapes what is possible tomorrow.

A Coherent Framework, Ready to Act

What this body of work offers is not merely a set of arguments. It is a complete, interoperable set of instruments: the public case for why reform is necessary, the policy analysis that shows what reform should look like, the legislative text that makes reform enforceable, and the technical specification that makes enforcement operational. Each document is a starting point. Together they constitute a programme.

The digital realm has become the primary space of social life, economic participation, and public discourse for hundreds of millions of people. The principles that govern that space (who owns what, who can move where, who is accountable to whom) are not technical details. They are constitutional questions. They deserve constitutional-grade answers.

Digital sovereignty is that answer. The framework assembled here shows not only why it is necessary, but how it can be built.

“The digital realm is not a service we consume. It is a world we inhabit. Its governance must reflect that truth.”