“Your face, your voice, your body, and your behavioural signatures are not public property. No one has the right to copy you, wear you, or profit from you without your permission.”

PART I — PREAMBLE

The Problem That Privacy Law Cannot Solve

The deepfake debate is routinely misframed. It is treated as a problem of content moderation, platform safety, or AI ethics. Each of those frames captures a symptom. None captures the disease. The disease is the collapse of identity sovereignty: the condition under which a person no longer controls their own face, voice, gestures, and public presence in digital space.

When a person’s likeness can be copied, remixed, and distributed at scale without their knowledge or consent, the question is no longer merely whether content is false. The question is whether individuals still have meaningful control over themselves. And if they do not, then digital sovereignty, the broader project of asserting rights over one’s digital life, is incomplete at its foundation. You cannot own your digital footprint if you do not own your digital face.

Existing legal frameworks are not equipped to address this. Privacy law concerns what others may collect about you. Data protection law concerns how that information is processed. Traditional publicity and intellectual property rights were designed for a world where replication required significant effort and distribution required physical infrastructure. None of them were built for a world in which a realistic replica of any person can be generated in minutes, distributed globally in hours, and experienced by millions as authentic.

That is the governance gap this body of work addresses. It is a gap that existing law does not close and that incremental platform policy will not fill. It requires a dedicated legal architecture, built from first principles, that treats identity integrity as a distinct and enforceable right.

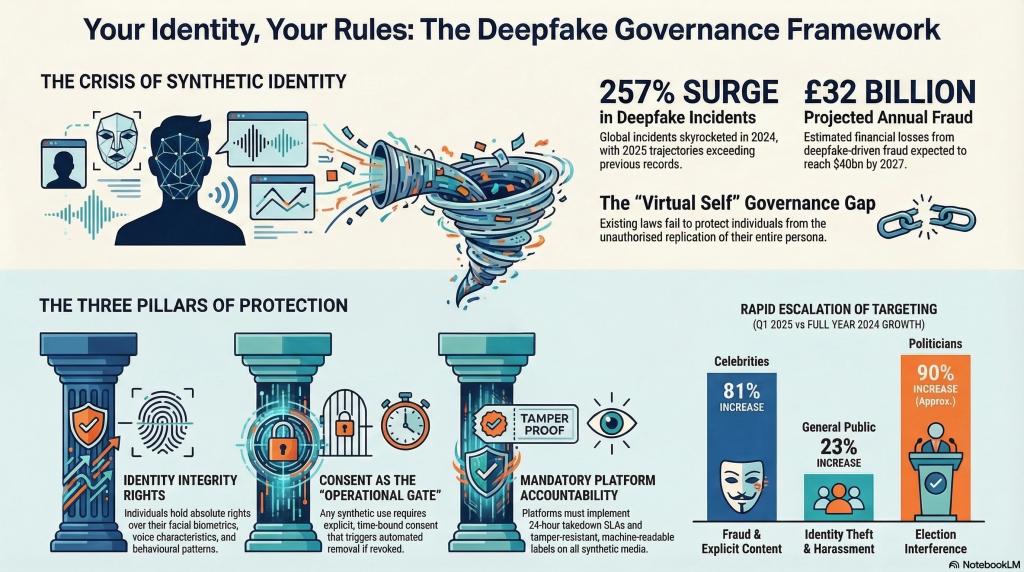

The Scale of the Threat Is No Longer Hypothetical

The urgency of this framework is grounded in evidence, not projection. Deepfake incidents increased by 257 percent in 2024. The first quarter of 2025 alone exceeded the full-year total for 2024 by 19 percent. Celebrity targeting rose by 81 percent in the same period. Annual losses from deepfake fraud in the United States are projected to reach $40 billion by 2027. More than 45 US states have enacted some form of deepfake legislation, yet no coherent federal framework exists, and cross-border enforcement remains largely theoretical.

But the numbers alone do not capture the nature of the harm. Deepfakes do not merely cause financial loss. They can destroy in hours reputations built over decades. They fabricate statements that people never made, place individuals in situations they never entered, and generate intimate imagery without consent. They are used to manipulate elections, commit executive fraud, silence journalists, and harass private individuals. The harms are qualitatively different from conventional fraud or defamation: the victim’s own identity is turned against them.

These are not edge cases. They are a rapidly expanding category of harm that affects ordinary people, public figures, corporate institutions, and democratic systems simultaneously. A framework that treats them as exceptional events will always be reactive and always be late.

Why Consent Is the Core of the Framework

The central organising principle of the framework assembled in these documents is consent. Not consent as a clause buried in terms of service, but consent as the operational gate through which any identifiable synthetic likeness must pass before it is created, published, or distributed.

Consent under this framework must be explicit, informed, specific in scope, time-bound, and revocable. Revocation must not trigger a negotiation. It must trigger removal and suppression. This requirement is not merely aspirational. It is the mechanism by which the framework prevents harm rather than remedying it after the fact. Deepfake harms spread at algorithmic speed. If consent can be ignored until litigation concludes months or years later, consent is not a protection. It is a formality.

The consent architecture connects directly to platform obligations. Platforms that host, recommend, or algorithmically amplify synthetic content do not occupy a neutral position. Distribution creates responsibility. Detection, labelling, consent verification, rapid takedown, hash-based suppression, and evidence preservation are not optional features. They are enforceable duties. This is the line the framework draws, and it is one that no existing legal or regulatory regime draws with sufficient clarity.

Identity as Property and Human Dignity

Underlying the entire framework is a claim about the nature of identity itself. The documents assembled here assert that face, voice, body, and behavioural signature are not merely personal data in the technical sense. They are extensions of the person. They carry the same moral weight as physical bodily integrity, and they should carry comparable legal protection.

This means that the framework operates on two registers simultaneously. On the rights register, it establishes that identity belongs to the individual, that it cannot be appropriated without consent, and that its commercial use requires explicit licensing and fair compensation. On the dignity register, it recognises that synthetic impersonation is not a property tort alone; it is an assault on a person’s standing in the world, their relationships, their voice, and their capacity to participate in public life.

The legislative architecture that follows from this dual foundation is comprehensive: identity integrity rights covering likeness, voice, and behavioural signatures; a copyright-adjacent intellectual property regime for synthetic identity; consent and licensing systems; platform obligations for detection and takedown; AI monitoring tools that serve the individual rather than the platform; cross-border enforcement mechanisms; and a tiered penalty structure designed to make non-compliance genuinely costly. This is the architecture the following documents build, section by section.

Identity Integrity in the Age of Deepfakes

The deepfake debate is not really about fake content. It is about stolen identity. Once a person’s face, voice, gestures, and public presence can be copied at scale, the real issue becomes control. Who owns your digital self, and who gets to profit from it? The answer will shape the next era of rights, regulation, and trust.

The deepfake debate is often framed as a problem of misinformation, platform safety, or AI ethics. That is too narrow. The deeper issue is identity. When a person’s face, voice, gestures, writing style, and public presence can be copied, remixed, and distributed without permission, the question is no longer just whether content is false. The question is whether a person still has control over themselves in digital space. Across the attached papers, a clear argument emerges: digital sovereignty means very little if identity sovereignty does not exist alongside it.

The documents make a strong case that synthetic media is not simply another content moderation challenge. It is a structural shift in how identity can be extracted, reproduced, and monetised. Existing frameworks for privacy, data protection, and even traditional intellectual property do not fully address this shift, because they were built for a world in which personal data was discrete and static, not one in which a whole human persona can be reconstructed and weaponised. That leaves a governance gap where platforms host synthetic replicas without strong consent systems, AI developers train on identifiable likenesses without robust permission structures, and victims face slow, fragmented, and often ineffective remedies.

That gap matters because the harms are no longer hypothetical. The stakeholder analysis in the accompanying materials points to a sharp increase in deepfake incidents, with 2024 seeing a 257 percent rise and Q1 2025 already exceeding the whole of 2024 by 19 percent. It also highlights projected annual deepfake fraud losses of $40 billion in the United States by 2027, alongside rising cases of political manipulation, executive fraud, sexual abuse imagery, and reputational attacks. These patterns show that deepfakes are not just celebrity problems or edge cases. They affect ordinary people, companies, media systems, and democratic institutions at once.

The central question is ownership of identity

The strongest idea running through the papers is simple: identity belongs to the person. The manifesto states this in direct terms. Your face, voice, body, and behavioural signatures are not public property. No one has the right to copy, wear, or profit from them without permission. This is the moral core of the framework, and it gives the rest of the legislative architecture a clear centre. It shifts policy away from vague platform goodwill and toward a rights-based model in which the individual is the primary rights holder, not the platform, not the model developer, and not the market.

From there, the documents build a practical legislative doctrine around consent. Consent is not treated as a buried clause in terms and conditions. It is the gate. It must be explicit, informed, specific, time-bound, and revocable. Revocation must trigger removal and suppression, not endless negotiation. This matters because deepfake harms move faster than ordinary legal processes. If consent can be ignored until after harm spreads, then consent is not a real protection. Several of the papers therefore connect consent to operational duties such as consent receipts, scope validation, revocation workflows, and platform-side verification before upload or generation in high-risk contexts.
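To make the consent gate concrete, here is a minimal Python sketch of how a platform might check a single consent receipt before generation or upload. It assumes a hypothetical `ConsentReceipt` record and a hypothetical `consent_permits` check; the field names, scope strings, and reason codes are illustrative only, since the documents describe the requirements (explicit, informed, specific, time-bound, revocable) but not a data model.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class ConsentReceipt:
    """Hypothetical consent receipt; field names are illustrative assumptions."""
    subject_id: str            # the person whose likeness is licensed
    licensee_id: str           # the party allowed to use it
    explicit: bool             # affirmatively given, not inferred from terms of service
    informed: bool             # subject was told what will be generated and where
    scopes: frozenset[str]     # specific permitted uses, e.g. "voice:advert"
    valid_from: datetime
    valid_until: datetime      # time-bound: every receipt carries an expiry
    revoked_at: datetime | None = None


def revoke(receipt: ConsentReceipt, when: datetime) -> None:
    """Record revocation; downstream removal and suppression would key off this."""
    receipt.revoked_at = when


def consent_permits(receipt: ConsentReceipt, licensee_id: str,
                    requested_scope: str, at: datetime) -> tuple[bool, str]:
    """Return (allowed, reason_code) for one requested use at time `at`."""
    if not (receipt.explicit and receipt.informed):
        return False, "NOT_EXPLICIT_OR_INFORMED"
    if receipt.licensee_id != licensee_id:
        return False, "WRONG_LICENSEE"
    if requested_scope not in receipt.scopes:
        return False, "OUT_OF_SCOPE"
    if receipt.revoked_at is not None and at >= receipt.revoked_at:
        return False, "REVOKED"
    if not (receipt.valid_from <= at < receipt.valid_until):
        return False, "OUTSIDE_VALIDITY_WINDOW"
    return True, "OK"


utc = timezone.utc
receipt = ConsentReceipt(
    subject_id="alice", licensee_id="studio-x", explicit=True, informed=True,
    scopes=frozenset({"voice:advert"}),
    valid_from=datetime(2025, 1, 1, tzinfo=utc),
    valid_until=datetime(2026, 1, 1, tzinfo=utc),
)
print(consent_permits(receipt, "studio-x", "voice:advert", datetime(2025, 6, 1, tzinfo=utc)))  # (True, 'OK')
print(consent_permits(receipt, "studio-x", "face:film", datetime(2025, 6, 1, tzinfo=utc)))     # (False, 'OUT_OF_SCOPE')
revoke(receipt, datetime(2025, 7, 1, tzinfo=utc))
print(consent_permits(receipt, "studio-x", "voice:advert", datetime(2025, 8, 1, tzinfo=utc)))  # (False, 'REVOKED')
```

Returning a reason code alongside the decision reflects the design documents' call for reason codes and appeal support: a refused upload can tell the uploader, and a reviewer, exactly which condition failed.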

Identity is becoming a legal and economic asset

A major contribution of the policy papers is the move to treat identity as a protected intellectual asset, but without reducing personhood to ordinary property law. The proposed model treats likeness, voice, and behavioural signatures as a form of personal intellectual property, or a Personal Identity Asset. That makes them licensable, enforceable, and compensable, while still recognising that they are grounded in human dignity and cannot simply be treated like a transferable commodity. This is a useful middle path. It gives lawmakers a structure for commercial use, licensing, inheritance, and audit, while rejecting the idea that public visibility equals free raw material for AI training or synthetic reproduction.

That framing becomes especially important when the papers address AI-generated likenesses as derivative works. The core legal argument is that if a synthetic replica is recognisably based on a real person, it should not be treated as a free-floating new object detached from that person’s rights. It should require permission. This helps answer one of the hardest questions in current AI policy: who has standing when a model does not copy a single source file, but clearly reconstructs a person’s identity from many inputs. The proposed answer is that recognisable identity triggers rights, duties, and remedies. That gives legislators a more realistic way to govern synthetic likeness than relying on old copyright doctrines alone.

Platforms are not neutral in a synthetic media economy

The framework also avoids a common policy error, which is to treat platforms as neutral pipes. The documents argue the opposite. Distribution creates responsibility. If a platform hosts, recommends, labels poorly, or amplifies harmful synthetic content, it is part of the chain of harm. This shows up in repeated calls for visible and machine-readable labels, consent verification, rapid takedown, suppression from search and recommendations, transparency reports, and independent audits. It also shows up in the insistence that algorithmic amplification carries enforceable duties and is not merely a product feature outside legal scrutiny.

This is one of the strongest features of the package. Many regulatory efforts focus on content removal after the fact. These documents look one layer deeper. They ask whether platforms accelerated the spread, whether synthetic content influenced recommendations, whether it was reused for training, and whether re-uploads were allowed to proliferate after an initial takedown. This is where the idea of suppression becomes more powerful than simple deletion. A harmful deepfake should not just disappear from one URL. It should be delisted, de-amplified, hashed, tracked, and blocked from resurfacing through recommendation systems.
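Suppression depends on recognising a re-upload even after it has been re-encoded or lightly edited, which is why hashing matters more than URL removal. The documents do not name a hashing scheme, so the Python sketch below assumes a simple 64-bit average hash (aHash) computed over an image already downscaled to an 8×8 grayscale grid, with a Hamming-distance threshold for near-duplicate matching. `SuppressionRegistry`, its threshold, and the case identifiers are hypothetical; deployed systems would more likely use more robust perceptual hashes (Meta's open-source PDQ is one example) together with video and audio fingerprinting.

```python
from __future__ import annotations


def average_hash(pixels: list[list[int]]) -> int:
    """64-bit average hash of an 8x8 grayscale grid (values 0-255).

    Each bit is 1 when the pixel is brighter than the grid's mean, so uniform
    brightness shifts and mild re-encoding tend to leave the hash unchanged.
    """
    flat = [p for row in pixels for p in row]
    if len(flat) != 64:
        raise ValueError("expected an 8x8 grid")
    mean = sum(flat) / 64
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


class SuppressionRegistry:
    """Hypothetical shared registry of hashes for content removed after a verified claim."""

    def __init__(self, max_distance: int = 5) -> None:
        self.max_distance = max_distance    # illustrative threshold, not from the source
        self._entries: dict[int, str] = {}  # hash -> case id

    def register(self, pixels: list[list[int]], case_id: str) -> int:
        h = average_hash(pixels)
        self._entries[h] = case_id
        return h

    def match(self, pixels: list[list[int]]) -> str | None:
        """Return the case id of a near-duplicate already suppressed, if any."""
        h = average_hash(pixels)
        for known, case_id in self._entries.items():
            if hamming(h, known) <= self.max_distance:
                return case_id
        return None


# Usage: a gradient image is taken down, then re-uploaded slightly brightened.
original = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
brightened = [[min(p + 10, 255) for p in row] for row in original]
unrelated = [[252 - p for p in row] for row in original]

registry = SuppressionRegistry()
registry.register(original, "case-001")
print(registry.match(brightened))  # case-001
print(registry.match(unrelated))   # None
```

Because the registry stores hashes rather than the images themselves, it could in principle be shared across platforms, which is the point of the cross-platform hash registry described in the technical specification.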

Law must be translated into systems, not slogans

Another strength is that the papers do not stop at moral principle. They move into systems design. The business and systems requirements outline sketches a modular enforcement platform with an Identity Vault and Consent Registry, an Identity Integrity Ledger, multimodal detection and labelling services, case management and evidence services, takedown and suppression orchestration, monitoring and alerting agents, licensing and compensation modules, training data compliance tools, and regulator reporting modules. In other words, the legislative vision is translated into software architecture. That matters because weak tech policy often fails at the handoff from statute to implementation. Here, the handoff is explicit.

The technical requirements also show a serious awareness of civil liberties and operational realism. The system design calls for encryption, immutable audit logs, biometric minimisation, on-device matching where possible, reason codes, appeal support, and high availability for reporting and takedown endpoints. It also recognises hard constraints such as adversarial evasion, false positives, cross-border conflict, and the practical limits of retroactively removing training data. That makes the proposal more credible. It does not pretend that perfect detection exists. It designs for accountable process, measurable duties, and continual adaptation.
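The "immutable audit logs" requirement, and the Identity Integrity Ledger named in the systems outline, can be approximated with a hash chain: each entry commits to the hash of the one before it, so any later edit to a recorded event breaks verification. The Python sketch below is a minimal illustration under that assumption. `IntegrityLedger` and the event fields are hypothetical, and a hash chain makes tampering detectable rather than impossible, so a real deployment would also need signed entries and an externally anchored copy of the latest hash.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON encoding of the event."""
    body = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class IntegrityLedger:
    """Hypothetical append-only, tamper-evident event log."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, event: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        h = _digest(prev, event)
        self.entries.append({"prev": prev, "event": event, "hash": h})
        return h

    def verify(self) -> bool:
        """False if any recorded event was altered or a link is broken (e.g. a
        middle entry removed or reordered). Truncating the tail is not detected
        without an externally anchored head hash."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or _digest(prev, entry["event"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


ledger = IntegrityLedger()
ledger.append({"type": "consent_granted", "subject": "alice", "scope": "voice:advert"})
ledger.append({"type": "consent_revoked", "subject": "alice"})
ledger.append({"type": "takedown_issued", "case": "case-001"})
print(ledger.verify())  # True

ledger.entries[1]["event"]["type"] = "consent_extended"  # simulate tampering
print(ledger.verify())  # False
```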

Deepfakes are a systems problem, not a niche abuse category

The stakeholder analysis shows why this issue needs a whole-of-society response. Individuals face identity theft, fraud, humiliation, and loss of control. Celebrities and creators face false endorsements, brand dilution, and commercial exploitation. Politicians and election bodies face voter suppression, diplomatic incidents, and targeted disinformation. Companies face executive fraud, operational disruption, and market manipulation. Journalists face growing uncertainty over whether audio and video evidence can be trusted. Regulators face fragmented laws, jurisdictional gaps, and attribution problems. This is not a single-sector policy problem. It is a systems problem that cuts across politics, economics, culture, and technical infrastructure.

The real-world cases in the research brief make that concrete. The Arup fraud case shows how multi-person deepfakes can defeat internal trust processes in companies. The Biden robocall shows how cloned audio can be used for election interference. The Taylor Swift case shows the scale and gendered nature of sexualised deepfake abuse. The Varoufakis example shows a more complex reputational attack, in which synthetic content mimics a public figure closely enough to stay credible before inserting damaging falsehoods. Taken together, these cases show that the problem is not just fake media. It is the collapsing cost of personalised deception.

AI must become part of the defence layer

One of the most original parts of the package is its insistence that AI must also be a guardian, not only a generator. The policy papers repeatedly argue that the same computational power used to synthesise a likeness can be used to detect anomalies, monitor unauthorised uses, verify provenance, automate takedowns, manage permissions, and help individuals defend narrative integrity. This matters because many policy discussions assume a simple fight between law and technology. These documents instead imagine law, standards, and protective AI working together. That opens the way for personal detection agents, consent and licensing systems, authenticity certification, and user-controlled identity vaults.

The implementation plan strengthens the package further by showing how to govern rollout. It proposes governance bodies, named roles, phased delivery, technical standards boards, civil society and creator advisory panels, and an Alternate Media Support Council. That last point matters. Verification should not become a privilege of large incumbents alone. If compliance tooling is too expensive, the result will be a two-tier information order where large platforms can certify truth and smaller outlets are left exposed. The plan addresses this by proposing subsidised verification tooling, training, grants, safe harbour guidance, and support for local, diaspora, and community publishers.

That concern about unequal capacity also shows why the incentives package is useful. The enforcement paper proposes not just penalties but a full compliance ecosystem. It includes regulator oversight, audit access, public compliance scoring, reduced audit frequency for strong performers, grants for open safety infrastructure, shared services for smaller platforms, and lawful licensing channels for legitimate commercial use. This is smart policy design. Punishment matters, but adoption improves when smaller actors get practical infrastructure instead of impossible mandates.

Rights without enforcement are symbolic

At the same time, the framework is clear that repeat harm must become expensive. Revenue-linked fines of up to 4 percent of global annual turnover, per-day penalties, corrective orders, algorithmic restrictions, service restrictions, civil compensation, and criminal referrals for egregious abuse all create a meaningful penalty ladder. This matters because platforms and tool providers respond to incentives. If identity harm remains cheaper than compliance, the market will continue to tolerate abuse. The documents recognise that reality and try to change the cost structure.
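The fine ceiling is simple arithmetic, but how a capped fine interacts with per-day penalties is a drafting choice worth making explicit. This Python sketch assumes, purely for illustration, that per-day penalties accrue on top of the revenue-linked fine rather than counting against the 4 percent cap; the penalty ladder lists them separately but does not say how they combine. The function name and all figures in the example are hypothetical, in unspecified currency units.

```python
from decimal import Decimal

FINE_CAP_RATE = Decimal("0.04")  # "up to 4 percent of global annual turnover"


def penalty_exposure(global_turnover: Decimal, proposed_fine: Decimal,
                     daily_penalty: Decimal, days_in_breach: int) -> Decimal:
    """Capped revenue-linked fine plus per-day penalties (assumed to sit outside the cap)."""
    cap = global_turnover * FINE_CAP_RATE
    fine = min(proposed_fine, cap)
    return fine + daily_penalty * days_in_breach


# Hypothetical platform: turnover of 10 billion, regulator proposes 600 million,
# plus 250,000 per day for 30 days of continued non-compliance.
total = penalty_exposure(Decimal("10000000000"), Decimal("600000000"),
                         Decimal("250000"), 30)
print(total)  # 407500000.00
```

Here the proposed 600 million is cut back to the 400 million cap, and 30 days of continued breach add 7.5 million. `Decimal` is used instead of floats so that monetary amounts are computed exactly.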

The package also handles predictable objections well. The strategic response documents argue that the bill should target harmful impersonation and non-consensual identity use, not synthetic media in general. Satire, critique, art, and journalism remain possible, but labelling remains the minimum standard, and malicious impersonation is not shielded as free expression. The plan also answers concerns about the burden on small platforms by proposing tiered obligations and certified shared services. That is a stronger position than either extreme. It does not ban synthetic media, and it does not leave everyone to self-regulate. It defines a boundary between lawful expression and identity abuse.

The real destination is a new digital settlement

The most ambitious part of the materials is the cross-border vision. Several papers propose mutual recognition, shared registries, evidence standards, arbitration pathways, and even a Convention on Digital Identity Integrity. That ambition is justified. Deepfakes ignore national borders. A cloned voice can be generated in one country, hosted in another, targeted at victims in a third, and monetised through a fourth. Domestic law still matters, because distribution can be regulated where it occurs, but long-term effectiveness will require international standards for consent, provenance, evidence exchange, and remedy.

What makes this whole set of documents work is that it does not treat identity protection as nostalgia or fear of innovation. It treats it as market design and democratic infrastructure. A future with deepfakes does not have to become a future without trust. But that outcome will not arrive on its own. It requires law that recognises personal identity as a protected asset, platforms as accountable intermediaries, AI as both risk and remedy, and enforcement as an operational system rather than a symbolic promise.

The attached papers therefore amount to more than a warning. They outline a legislative and technical blueprint for a new digital settlement. Its core message is clear and worth repeating because it is both legally sharp and publicly legible: your face, your voice, your identity, your rules. In an age of synthetic media, that may become one of the defining rights claims of the digital era.

PART II — THE DOCUMENTARY FRAMEWORK

The Role of Each Document in the Programme: Deepfake policy and research

The documents in this collection are not redundant variations on a single argument. Each occupies a distinct and necessary role in a chain that runs from moral assertion to public mobilisation, from policy analysis to legislative text, from enforcement design to technical specification and strategic execution. The chain is complete by design. A reform programme for identity integrity requires every link.

1. Identity Integrity in the Age of Deepfakes

The intellectual anchor and synthesis document. This essay establishes the central argument: that digital sovereignty is incomplete without identity sovereignty, and that the governance gap left by existing privacy, data protection, and intellectual property law requires a dedicated framework. It contextualises the statistical evidence, introduces the consent-centred architecture, explains why AI must be positioned as a tool of individual protection rather than platform exploitation, and frames the full body of work as a coherent and actionable programme. It is the document that gives every other piece its purpose and direction.

2. The Identity Integrity Manifesto

The moral and civic foundation of the framework. The Manifesto states, in direct and unambiguous terms, the nine principles on which the entire legislative architecture rests: identity belongs to the person; consent is the gate; every synthetic artefact must carry a label; speed matters; distribution creates responsibility; evidence must be preserved; repeat harm must become expensive; truth needs tools; and cross-border harm requires cross-border enforcement. It does not argue the case; it asserts the standard. The Manifesto functions as the benchmark against which every policy choice, legislative clause, and technical specification in the other documents is measured.

3. Deepfake Governance Act — Policy Paper (Primary)

The comprehensive policy architecture. This paper presents the full analytical and normative case for the Deepfake Governance Act: the crisis of synthetic identity; the failure of existing frameworks; the four-dimensional governance model covering likeness rights, intellectual property, platform obligations, and AI governance; and the detailed rights structure for individuals, including the right to consent, revoke, license, and receive compensation. It defines the scope of covered harms, sets out the regulatory principles, and provides the analytical foundation for the draft legislation. It is the primary reference for policymakers, legislators, and institutional stakeholders.

4. Deepfake Governance Act — Policy Paper (Variant)

A parallel policy paper with complementary emphasis. Where the primary paper foregrounds the rights architecture, this variant foregrounds the IP-adjacent framework for synthetic identity, the commercial licensing model, and the mechanics of AI as a protective instrument. Read together, the two policy papers provide a fuller picture of the design choices embedded in the framework and surface the trade-offs that legislators will need to resolve in drafting.

5. Policy Paper — Digital Identity Integrity and Synthetic Media Governance

The cross-cutting governance analysis. This paper situates the identity integrity framework within the broader landscape of digital rights, explains why synthetic media governance must operate in parallel to, rather than within, data portability and privacy regimes, and maps the specific governance gap that the Deepfake Governance Act is designed to fill. It introduces the concept of identity as a copyright-adjacent asset, addresses cross-border distribution challenges, and proposes AI-enabled consent management tools as a structural component of the framework.

6. Deepfake Stakeholder Impact Analysis

The evidentiary foundation. This research brief and PESTLE analysis provides the factual scaffolding for the entire framework: the 257 percent rise in deepfake incidents; the $40 billion projected annual fraud losses; the 81 percent increase in celebrity targeting; the fragmented legislative landscape across more than 45 US states; and the documented gaps in legal ownership and control mechanisms. It traces the impacts across individuals, corporations, media institutions, and democratic systems, and demonstrates through real-world cases that deepfake governance is not a future problem. It is a present emergency. Every quantitative claim in the other documents traces back to the analysis here.

7. Enforcement, Penalties, Arbitration, and Incentives

The enforcement architecture. This specialist section provides the detailed statutory machinery for making the framework operational: the competent authority structure and regulator powers; the scope of platform duties including detection, labelling, consent verification, and takedown service levels; the AI developer obligations covering training data provenance and consent-based controls; the penalty regime including revenue-linked fines, per-day penalties, and compensation requirements; and the arbitration and dispute resolution mechanisms. Without this document, the rights framework has no teeth. With it, non-compliance becomes genuinely costly and accountability becomes auditable.

8. Strategic Response to Pushback

The political and legislative resilience document. This paper anticipates and rebuts the most likely arguments against the framework, organised by stakeholder: legislators and government bodies raising free speech, enforceability, and cost concerns; platforms citing detection reliability and operational burden; AI developers invoking research freedom; civil liberties groups challenging labelling and consent requirements; and international partners questioning jurisdictional scope. For each objection it provides a practical rebuttal that preserves the integrity of the framework while demonstrating workability. It is the document that prepares advocates, legislators, and regulators for the consultation process.

9. Strategic Implementation Plan

The delivery blueprint. This plan maps the path from policy intent to enforceable legislation to real-world operation. It defines workstreams, governance roles, deliverables, milestones, consultation mechanisms, and success measures, including median time to action for verified claims, re-upload rates after suppression, label coverage rates, and victim satisfaction scores. It also addresses the specific challenge of ensuring that verification tooling and compliance infrastructure are accessible to community and alternative media, not only major platforms. Without this document, the framework remains a set of intentions. With it, implementation becomes plannable, measurable, and accountable.
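Success measures are only comparable if every platform and regulator computes them the same way. The plan names the measures; the definitions in this Python sketch are assumptions made for illustration, not taken from the documents. Here time to action runs from claim verification to takedown, the re-upload rate is detected re-uploads per suppressed item, and label coverage is the share of detected synthetic items that carried a label. All function names are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median


def median_hours_to_action(cases: list[tuple[datetime, datetime]]) -> float:
    """Median hours from claim verification to takedown, over (verified_at, actioned_at) pairs."""
    return median((acted - verified).total_seconds() / 3600 for verified, acted in cases)


def reupload_rate(suppressed_items: int, reuploads_detected: int) -> float:
    """Re-uploads caught per suppressed item (assumed definition)."""
    if suppressed_items <= 0:
        raise ValueError("no suppressed items to measure against")
    return reuploads_detected / suppressed_items


def label_coverage(labelled: int, synthetic_detected: int) -> float:
    """Share of detected synthetic items that carried a label (assumed definition)."""
    if synthetic_detected <= 0:
        raise ValueError("no synthetic items detected")
    return labelled / synthetic_detected


t0 = datetime(2025, 3, 1, 9, 0)
cases = [(t0, t0 + timedelta(hours=2)),
         (t0, t0 + timedelta(hours=5)),
         (t0, t0 + timedelta(hours=30))]
print(median_hours_to_action(cases))  # 5.0
print(reupload_rate(400, 12))         # 0.03
print(label_coverage(950, 1000))      # 0.95
```

The median is used instead of the mean so that a handful of very slow or contested cases does not mask typical response times. Those outliers would still need separate reporting.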

10. BRS and SRS — Deepfake and Synthetic Media Governance Implementation Platform

The technical specification. This document translates the statutory framework into an operational and technical architecture: the detection, labelling, consent verification, and takedown workflows; the consent receipt and revocation automation systems; the evidence preservation and chain-of-custody infrastructure; the cross-platform hash registry and suppression mechanisms; the training data provenance logging and audit interfaces; the compensation and licensing workflows; and the regulator-grade compliance scoring and reporting systems. It is the engineering counterpart to the legislative text: the specification that ensures the Act can be not merely passed but enforced in practice.

Taken together, these ten documents span every register that a serious reform programme requires: the moral assertion, the public manifesto, the evidentiary foundation, the policy architecture, the governance analysis, the legislative enforcement design, the political resilience strategy, the implementation roadmap, and the technical specification. Each is a starting point. Together they constitute an integrated and actionable programme for the governance of synthetic identity.

Document summary table (see: Deepfake policy and research)

| Document name | Short description | Relevance |
| --- | --- | --- |
| The Identity Integrity Manifesto.docx | A concise statement of first principles for synthetic media governance, centred on consent, labelling, fast remedy, evidence preservation, platform responsibility, and cross-border enforcement. | This is the moral and political core of the full package. |
| Deepfake_Governance_Act_Policy_Paper .docx | A full policy paper setting out the crisis analysis, governance pillars, platform duties, consent rules, and AI-based protection systems. | This is the main legislative blueprint. |
| Deepfake_Governance_Act_Policy_Paper (1).docx | A parallel version of the main policy paper with the same broad focus on identity rights, IP protection, platform accountability, and AI guardianship. | Useful as a supporting or alternative draft of the flagship paper. |
| Policy Paper Digital Identity Integrity and Synthetic Media Governance.docx | A rights-based and technically grounded paper introducing Personal Identity Assets, Personal Identity Rights, identity ledgers, identity vaults, audits, and treaty-level coordination. | Especially valuable for connecting law, systems design, and international governance. |
| Deepfake_Stakeholder_Impact_Analysis.docx | A research brief covering real-world cases, stakeholder impacts, PESTLE analysis, legal fragmentation, and broader societal implications. | This provides the evidence base and urgency case for regulation. |
| BRS and SRS outline for Deepfake and Synthetic Media Governance Implementation.docx | A business and systems requirements outline translating policy into a platform with consent, detection, evidence, suppression, licensing, and regulator reporting modules. | This is the implementation bridge between law and software. |
| Enforcement Penalties Arbitration and Incentives.docx | A detailed enforcement addendum covering regulator powers, duties, penalties, civil remedies, criminal thresholds, arbitration, and incentives. | This gives the framework enforcement strength and practical leverage. |
| Strategic Implementation Plan.docx | A delivery plan covering governance bodies, workstreams, milestones, consultation structures, alternate media support, and phased rollout. | Essential for turning policy into an operational programme. |
| Strategic response to push back.docx | A consultation and advocacy support paper addressing objections around free expression, technical feasibility, burden on smaller actors, and implementation cost. | Highly relevant for committee scrutiny, public debate, and coalition building. |

PART III — CONCLUSION

The Stakes of Letting This Moment Pass

Identity has always been the foundation of legal personhood. It is how individuals are recognised by institutions, trusted by communities, and protected by law. For most of human history, identity was difficult to replicate. Its physical anchors (face, voice, gesture, presence) required proximity and effort to copy. That constraint was informal but powerful. It meant that identity theft, impersonation, and fraud, while possible, were bounded by practical limits.

Those limits no longer exist. The technology to replicate any person’s face, voice, mannerisms, and communicative style is widely available, improving rapidly, and increasingly accessible to non-specialists. The distribution infrastructure to reach millions of people with a synthetic replica already exists and operates at algorithmic speed. The legal and institutional infrastructure to prevent, detect, and remedy synthetic impersonation does not yet match this reality.

That gap will not close on its own. Platforms have structural incentives to prioritise engagement over safety. AI developers have commercial incentives to train on the richest available datasets, which include vast quantities of identifiable likeness. Bad actors have every incentive to exploit a system in which harms spread faster than remedies. The gap closes only through deliberate, comprehensive, and enforceable governance.

What This Framework Establishes

The body of work assembled here proposes a governance framework that is simultaneously rights-based, technically grounded, and operationally specific. Its core commitments are not radical. They follow from principles that democratic societies already accept in other domains: that persons have rights over their own bodies; that those rights do not disappear when the body is represented in digital form; that commercial exploitation requires consent and fair compensation; and that the distribution of harmful content creates responsibility for the distributor, not only the originator.

Applied to synthetic identity, these principles produce a framework with five structural pillars:

- Identity integrity rights that are explicit, enforceable, and non-waivable, covering face, voice, behavioural signatures, and stylistic identity across all synthetic media contexts

- A consent architecture in which identifiable likeness cannot be used without explicit, specific, time-bound, and revocable authorisation, with revocation triggering immediate operational response

- Platform obligations that are technically specified and independently auditable, including detection, labelling, consent verification, rapid takedown, suppression, evidence preservation, and transparency reporting

- AI governance provisions that distinguish between AI deployed against individuals and AI deployed in their defence, with mandatory provenance logging, training data consent controls, and certified individual monitoring agents

- An enforcement and penalty structure that makes non-compliance genuinely costly through revenue-linked fines, per-day penalties, compensation requirements, and a tiered regulatory response calibrated to harm severity and platform reach
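To make the consent pillar concrete, the following is a minimal sketch of how a consent record might enforce the "explicit, specific, time-bound, and revocable" conditions in software. It is illustrative only: the class names `ConsentGrant` and `ConsentRegistry`, the `on_revoke` callback list, and the attribute and purpose strings are hypothetical and are not taken from the framework documents. The callbacks stand in for whatever operational response (takedown, suppression, licence termination) a real platform would trigger on revocation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Callable, List, Optional


@dataclass
class ConsentGrant:
    """Hypothetical record of one authorisation to use an identifiable likeness."""
    subject_id: str                       # person whose likeness is covered
    grantee_id: str                       # party authorised to use it
    attributes: frozenset                 # specific attributes, e.g. {"voice"}
    purpose: str                          # specific permitted use
    valid_from: datetime
    valid_until: datetime                 # time-bound: every grant expires
    revoked_at: Optional[datetime] = None

    def permits(self, grantee_id: str, attribute: str, purpose: str, at: datetime) -> bool:
        """True only if every condition of the grant holds at time `at`."""
        if self.revoked_at is not None and at >= self.revoked_at:
            return False
        return (
            grantee_id == self.grantee_id
            and attribute in self.attributes
            and purpose == self.purpose
            and self.valid_from <= at < self.valid_until
        )


class ConsentRegistry:
    """Hypothetical registry in which revocation notifies subscribers immediately."""

    def __init__(self) -> None:
        self.grants: List[ConsentGrant] = []
        # Assumed hook: each callback represents an operational response.
        self.on_revoke: List[Callable[[ConsentGrant], None]] = []

    def grant(self, g: ConsentGrant) -> None:
        self.grants.append(g)

    def revoke(self, subject_id: str, grantee_id: str, at: datetime) -> int:
        """Revoke all active grants from subject to grantee; return how many."""
        count = 0
        for g in self.grants:
            if g.subject_id == subject_id and g.grantee_id == grantee_id and g.revoked_at is None:
                g.revoked_at = at
                count += 1
                for callback in self.on_revoke:
                    callback(g)
        return count

    def is_authorised(self, subject_id: str, grantee_id: str,
                      attribute: str, purpose: str, at: datetime) -> bool:
        # Default deny: use is allowed only if some grant explicitly covers it.
        return any(
            g.subject_id == subject_id and g.permits(grantee_id, attribute, purpose, at)
            for g in self.grants
        )


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
registry = ConsentRegistry()
suppressed: List[str] = []
registry.on_revoke.append(lambda g: suppressed.append(g.purpose))
registry.grant(ConsentGrant("alice", "studio", frozenset({"voice"}), "audiobook",
                            valid_from=now, valid_until=now + timedelta(days=365)))
print(registry.is_authorised("alice", "studio", "voice", "audiobook", now))  # True
print(registry.is_authorised("alice", "studio", "face", "audiobook", now))   # False: not covered
registry.revoke("alice", "studio", now + timedelta(days=30))
print(registry.is_authorised("alice", "studio", "voice", "audiobook",
                             now + timedelta(days=31)))                       # False: revoked
```

The default-deny check reflects the principle that identifiable likeness cannot be used without authorisation: the absence of a matching grant is treated as a refusal, not as permission.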

The Relationship Between Speed and Justice

One of the most important insights running through this framework is that the pace of harm and the pace of remedy are structurally mismatched under existing systems. A deepfake video can reach ten million viewers before a takedown request is processed. A false statement attributed to a political candidate can alter an election before a correction is published. A synthetic intimate image can destroy a private individual’s relationships before any court has jurisdiction to act.

This asymmetry is not an accident. It is the predictable result of building remediation systems around litigation timescales in a distribution environment that operates at millisecond timescales. The framework addresses this directly: mandatory service levels for verified identity claims; operational suppression systems that act before full adjudication; hash-based re-upload prevention that does not require repeated complaints; and AI monitoring agents that can detect and report violations continuously rather than retrospectively.
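Hash-based re-upload prevention depends on a detail worth making explicit: an exact cryptographic hash such as SHA-256 changes completely if a file is re-encoded or slightly altered, so suppression systems generally rely on perceptual hashes that stay close for visually similar content. The sketch below uses a simple "average hash" over an 8x8 grayscale grid, assuming frames have already been decoded and downscaled. The `SuppressionList` class and the `max_distance` tolerance of 5 bits are hypothetical choices for illustration; production systems use more robust perceptual hashes (for example PDQ) and tuned thresholds.

```python
from typing import List, Sequence

Grid = Sequence[Sequence[int]]  # assumed: 8x8 grayscale values in 0-255


def average_hash(grid: Grid) -> int:
    """64-bit average hash: a bit is 1 where a pixel is brighter than the mean."""
    pixels = [p for row in grid for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


class SuppressionList:
    """Hypothetical blocklist of hashes for content already found to violate a claim."""

    def __init__(self, max_distance: int = 5) -> None:
        self.hashes: List[int] = []
        self.max_distance = max_distance  # assumed tolerance, not a standard value

    def add(self, grid: Grid) -> None:
        self.hashes.append(average_hash(grid))

    def blocks(self, grid: Grid) -> bool:
        """True if an upload is within `max_distance` bits of a suppressed item."""
        h = average_hash(grid)
        return any(hamming(h, known) <= self.max_distance for known in self.hashes)


original = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
reencoded = [[min(255, p + 3) for p in row] for row in original]   # brightened copy
unrelated = [[255 if (r + c) % 2 else 0 for c in range(8)] for r in range(8)]

suppression = SuppressionList()
suppression.add(original)              # one verified complaint
print(suppression.blocks(reencoded))   # True: altered copy still caught
print(suppression.blocks(unrelated))   # False: different content passes
```

Once an item is on the list, every later upload is checked against it automatically, which is what removes the need for the victim to file a fresh complaint for each copy.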

The goal is not to eliminate process. It is to ensure that the speed of protection can match the speed of harm. That requires both legal obligations and technical infrastructure, and the framework specifies both.

The Window for Action

The case for urgency is not rhetorical. The technological trajectory is clear: synthetic media capabilities will continue to improve, costs will continue to fall, and access will continue to broaden. The question is not whether deepfake harms will become more severe and more common. They will. The question is whether governance frameworks are in place before the ecosystem hardens further.

A generation of political, commercial, and social infrastructure is being built in an environment where synthetic identity goes ungoverned. Every year without a comprehensive framework is a year in which norms calcify, platforms optimise for engagement over safety, AI systems train on unprotected likenesses, and victims discover that their remedies are slow, fragmented, and often ineffective. The cost of that delay is not abstract. It is measured in careers destroyed, elections distorted, relationships broken, and trust in media and institutions further eroded.

Several jurisdictions have begun to move. The EU AI Act addresses some synthetic media risks, chiefly through transparency obligations requiring that deepfakes be disclosed as artificially generated or manipulated. More than 45 US states have enacted partial deepfake legislation. The UK Online Safety Act creates some relevant platform duties. But partial, fragmented, and non-interoperable measures do not constitute a governance framework for harms that are structurally global. They create a patchwork that sophisticated actors navigate with ease and that victims cannot reliably use.

What is needed is a coherent, comprehensive, and enforceable framework that treats identity integrity as a first-order right, not a footnote in existing privacy or IP regimes. The documents assembled here provide that framework — from moral principle to technical specification, from public manifesto to legislative text, from enforcement architecture to implementation roadmap.

The Integrity of the Future Self

Every person who participates in digital life leaves a trail of likeness: photographs, videos, voice recordings, written expression, behavioural patterns. That trail is the raw material from which synthetic replicas are built. Managing it, protecting it, and asserting rights over it is not a niche concern for celebrities and public figures. It is a universal condition of modern life.

The framework presented here does not seek to prevent synthetic media. It seeks to govern it: to ensure that creativity, satire, journalism, and artistic expression remain possible, while ensuring that impersonation, exploitation, harassment, and fraud do not. That balance requires clear rules, operational systems, and genuine enforcement — not good intentions and voluntary guidelines.

Identity is the most fundamental asset a person possesses. It precedes property. It underpins every other right. When it can be reproduced without consent, distributed without accountability, and weaponised without remedy, the foundations of individual autonomy are not merely inconvenienced. They are structurally undermined.

This framework is the architecture for defending those foundations. The documents assembled here show not only why that defence is necessary, but precisely how it can be built.

“If data is the currency of the digital age, identity is its sovereign expression. It must be protected not merely as personal information, but as a form of intellectual property and human dignity.”