Preamble

Why the future of work is not simply a choice between humans and robots: it is about designing the operating system that lets them work together.

There is a question that has followed the robotics industry since the beginning: what happens when a skilled person can genuinely be somewhere, not through a video feed, not through a joystick, but through a machine that translates presence, sensation, and agency across continents? When we started asking that question in earnest, the answers surprised us. Not because people doubted the technology, but because so many of the building blocks already exist. A defence contractor in Bristol working on haptic demining. A care home consortium in Osaka testing telepresence robots. A logistics firm in Rotterdam experimenting with remote yard operation. All of them face the same challenge: how do you take these scattered pieces and assemble something that scales, that regulators will accept, and that operators will actually trust?

The Intelligence Between the Robots

| The market does not need a binary choice between full autonomy and manual control. It needs the bridge — and that bridge is becoming a platform. |

There is a question that has been sitting at the back of my mind for a while now, and I suspect it has been sitting at the back of yours too, if you have been watching the robotics and drone space closely. What actually happens when you put on a haptic suit, pull on a VR headset, and step virtually into the body of a robot on the other side of the world? Not in a demo reel. Not in a science fiction pitch deck. Right now, today, in an offshore oil facility, a contaminated factory floor, or a care home in Japan where there are simply not enough nurses.

That question, deceptively simple and operationally profound, is what the Progressive Autonomy Ecosystem is designed to answer. This piece is your deep dive into why it matters, where it stands today, where it is heading, and what the real risks are. Because there are several, and we will get to them. But first, the context. We are living through a genuine collision of technologies, and the implications have not been fully worked out.

Supporting Artefacts

The following documents accompany this issue. All artefacts are accessible via the shared folder: Google Drive Artefacts Folder

| File name | Description |

| haptic_teleoperation_analysis.docx | Analysis of haptic teleoperation and remote embodiment: system concept, benefits, constraints (latency, safety), and how human dexterity is projected into hazardous sites. |

| Implementation pathway considerations.docx | Notes on practical implementation pathways: phased rollout, prerequisites, stakeholder adoption, and real-world constraints for deploying remote robotic operations. |

| Progressive_Autonomy_Ecosystem_Master_Document.docx | Master document describing the Progressive Autonomy Ecosystem: integrated remote embodiment, hybrid autonomy, digital twin integration, and the end-to-end ecosystem architecture. |

| Robot and Drone Formats and Use Cases.docx | Catalogue of robot and drone formats with use cases (inspection, manipulation, situational awareness) and matched platforms to mission environments. |

| Vision Document.docx | Vision for integrated remote embodiment and progressive autonomy: narrative, goals, target outcomes, and the future-state operating concept. |

| Assessment and Implementation Framework.docx | Master framework describing the Progressive Autonomy Ecosystem: integrated remote embodiment, hybrid autonomy, digital twin integration, and end-to-end architecture. |

| Develop a comprehensive vision document using the established persona and playbook.docx | Vision for integrated remote embodiment and progressive autonomy: narrative, goals, target outcomes, and future-state operating concept. |

| Five lives in the loop | Fictional case studies illustrating use cases across sectors. |

The Orchestration Imperative

Let’s start with a confession: the industry has been asking the wrong question for years. The robotics debate has been framed as a binary: full autonomy versus manual control. Boston Dynamics versus joysticks. Tesla bots versus teleoperation. The implication is that autonomy is the destination and everything else is just a waypoint. But that framing misses something fundamental.

The real world does not do binaries. It does offshore platforms where connectivity drops for 200 milliseconds and a multi-million-pound asset is hanging in the balance. It does care homes where an elderly woman needs help at 3am but would be alarmed by a fully autonomous machine she has never seen and does not understand. It does bomb disposal where a robot must probe gently, feel resistance, and make a judgment call that no AI system today is legally certified to make. These are not edge cases. They are the majority of high-value, high-risk, high-judgment work.

The Problem Nobody Talks About

The mainstream robotics conversation collapses into a familiar binary: either we are building fully autonomous robots that handle everything themselves, or we are stuck with clunky remote controls and humans doing the dangerous work in person. That is a false choice, and it costs industries and people considerably.

Right now, billions of dollars are lost annually because skilled labour cannot be physically present in hazardous, remote, or geographically constrained environments. An offshore pipeline needs inspection. A contaminated site needs assessment. An elderly person in rural Japan needs help getting out of bed at 3am. A bomb disposal team is staring at an IED with no equipment capable of the precision the task demands.

| Fully autonomous robots, as impressive as they are in controlled demonstrations, still struggle when the environment gets messy, novel, or morally complex. That describes most of the real world. |

At the same time, pure teleoperation has existed for decades. But it has always felt disconnected. You are staring at a screen, moving a joystick, with no real sense of what the machine is experiencing. No touch. No resistance. No feel. That is the gap. And that gap is the opportunity.

What Is the Progressive Autonomy Ecosystem?

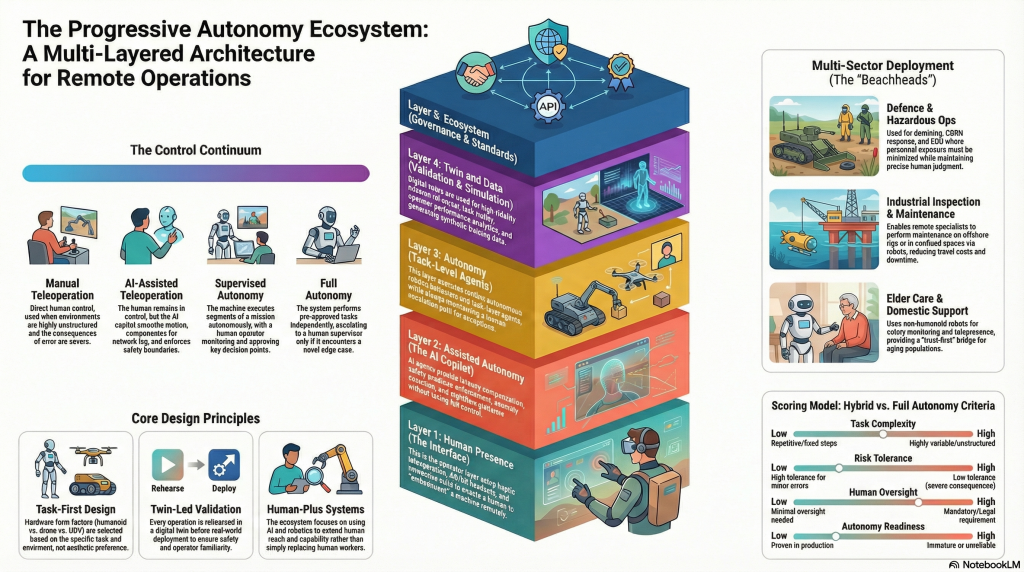

Think of it as five layers working together. Not a single product. Not a single robot. A connected operating model that flexes between full human control and increasing machine autonomy depending on what the task, the risk level, and the regulatory environment demand.

| It is the orchestration layer: the connective tissue that lets humans and machines work together with intelligence. |

The five layers are:

- Human Presence Layer — haptic teleoperation, VR and AR interfaces, the sensation of being physically present at a remote site.

- Assisted Autonomy Layer — AI copilots that compensate for latency, enforce safety envelopes, and flag what human operators miss.

- Autonomy Layer — task-level machine execution for routine, repeatable work.

- Digital Twin and Data Layer — simulation, training environments, incident replay, and the institutional memory of operations.

- Ecosystem Layer — standards, certification frameworks, partner APIs, and an operator marketplace that turns isolated components into a functioning network.

At the base is the Human Presence Layer. This is where haptic teleoperation lives. The operator wears a haptic exosuit or gloves and a VR or AR headset, receiving genuine bidirectional feedback from the remote machine — pressure, resistance, temperature. The operator is not just watching a feed. In a meaningful sense, they are there.

Then comes the Assisted Autonomy Layer. This is not the AI taking over. This is the AI filling in the gaps that humans cannot compensate for on their own. Latency compensation absorbs the 50 to 150 millisecond round-trip delay before it causes a manipulation error. Safety envelope enforcement prevents the machine from crushing what it should not. Anomaly detection flags what the operator may have missed. The AI is a co-pilot. The human decides; the AI compensates.
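Safety envelope enforcement can be pictured as a clamp that sits between the operator's command and the machine. The sketch below is illustrative only: the `SafetyEnvelope` and `Command` types, their field names, and the limit values are assumptions made for this example, not part of any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyEnvelope:
    """Hypothetical per-task limits (names and units are assumptions)."""
    max_force_n: float    # maximum grip force, in newtons
    max_speed_mps: float  # maximum tool speed, in metres per second


@dataclass(frozen=True)
class Command:
    """A single operator command as received from the haptic interface."""
    force_n: float
    speed_mps: float


def enforce_envelope(cmd: Command, env: SafetyEnvelope) -> tuple[Command, bool]:
    """Clamp a command to the envelope.

    Returns the safe command and a flag saying whether it was altered,
    so the intervention can be logged and shown to the operator.
    """
    safe = Command(
        # Force is clamped to the range [0, max_force_n].
        force_n=min(max(cmd.force_n, 0.0), env.max_force_n),
        # Speed is clamped symmetrically to [-max_speed_mps, +max_speed_mps].
        speed_mps=min(max(cmd.speed_mps, -env.max_speed_mps), env.max_speed_mps),
    )
    return safe, safe != cmd


# Example: the operator squeezes harder than the task allows.
envelope = SafetyEnvelope(max_force_n=20.0, max_speed_mps=0.25)
safe_cmd, altered = enforce_envelope(Command(force_n=50.0, speed_mps=0.1), envelope)
```

The human still decides what to do; the clamp only bounds how hard and how fast the machine is allowed to do it, and the `altered` flag keeps that intervention visible.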

Above that sits a selective Autonomy Layer. Task-level autonomous behaviours handle the routine and repeatable parts of a mission: navigate to waypoint, conduct a corridor scan, log sensor readings. Autonomy takes the rote work; humans keep the judgment calls.

The Digital Twin and Data Layer ensures operators are never entering an environment cold. Before anyone touches a real site, they train in a photorealistic digital replica of it. The care worker about to assist a patient in Osaka has already completed a simulated shift in a replica of that apartment. The offshore inspector knows the rig’s layout before setting foot on it. When incidents occur, the twin replays them for root cause analysis. This is where institutional memory is built.

Finally, the Ecosystem Layer: standards, partner APIs, certification frameworks, and a marketplace of certified operators available for global deployment. This is where the platform stops being a product and becomes a network.
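One way to picture how the layers flex between human control and machine autonomy is a small mode selector. This is a minimal sketch under stated assumptions: the three modes mirror the ones named in this piece, but the function name, its inputs, and the decision order are hypothetical and would in practice depend on the task, the risk level, and the regulator.

```python
from enum import Enum


class Mode(Enum):
    TELEOPERATION = "teleoperation"              # human in direct control
    ASSISTED = "ai_assisted"                     # human decides, AI copilot compensates
    SUPERVISED_AUTONOMY = "supervised_autonomy"  # machine executes, human supervises


def select_mode(task_is_routine: bool,
                autonomy_permitted: bool,
                copilot_available: bool) -> Mode:
    """Pick a control mode for one task (illustrative policy only)."""
    # Routine, repeatable work goes to the machine only where rules allow it.
    if task_is_routine and autonomy_permitted:
        return Mode.SUPERVISED_AUTONOMY
    # Otherwise the human keeps the judgment call, with AI help if available.
    if copilot_available:
        return Mode.ASSISTED
    return Mode.TELEOPERATION


# A corridor scan in a sector that permits autonomy versus a probing task that does not.
scan_mode = select_mode(task_is_routine=True, autonomy_permitted=True, copilot_available=True)
probe_mode = select_mode(task_is_routine=False, autonomy_permitted=False, copilot_available=True)
```

The point of the sketch is the ordering: autonomy is an option that has to be earned per task, and the default always falls back toward the human.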

Where Does This Actually Work?

Defence and Hazardous Operations

Start with demining. A robot with a disposable probe arm enters a minefield, works systematically, maps the telemetry, and if something detonates, you lose the probe. Not the operator. Not a human life. The haptic-equipped operator, working safely from a control centre, has felt the ground through the robot’s sensors and made judgment calls that no autonomous system is yet trusted or legally permitted to make in that context. Extend that to CBRN (chemical, biological, radiological, and nuclear) response, offshore platform maintenance, confined space inspection, and EOD (explosive ordnance disposal). In each case the value proposition is direct: zero personnel exposure, full expert judgment.

Industrial and Infrastructure

Offshore inspection today involves helicopters, divers, permits to work, and travel costs that run to tens of thousands of pounds per visit. A drone operated by a haptic-equipped specialist can handle much of that work at a fraction of the cost. A pipeline crawler can reach places no human can access, operated by a specialist three thousand miles away who has already reviewed the digital twin of the asset before starting the shift.

Elder Care

This is the application that gives people pause. Japan is the obvious example: one of the oldest populations on Earth, chronic care worker shortages, and housing stock not designed for robot interaction. The model involves training care workers remotely using digital twins of individual homes, then deploying telepresence robots — and eventually light humanoids — that the remote carer operates with haptic feedback, language translation, and cultural context embedded in their preparation.

Is this a perfect solution? No. Privacy concerns are real and non-trivial. The question of what areas of a home a remote operator can see is not a minor technical detail. Cultural acceptance dynamics differ substantially between Japan, Germany, the UK, and the United States. But as a bridge — as a way of extending care access while autonomous systems mature and regulation catches up — it may be one of the most consequential deployments of this technology.

Remote Labour and the Skills Question

Here is the application that makes pure technologists uncomfortable but that economists grasp immediately. If you have a skill — operating a forklift or a crane, wiring industrial equipment, conducting specialist inspections — and that skill is needed in London or Tokyo, but you are based in Lagos or Manila, and a certified robot platform exists in that facility that you can safely and precisely control from a remote centre, what does that mean for global labour markets?

| Part-time remote robot operators. Retirees with decades of specialist knowledge working flexible hours from home control centres. Workers with disabilities who could not be physically present now deployed in roles where physical presence was the only barrier. The social implications are as substantial as the technical ones. |

Progressive Autonomy vs. Full Autonomy

It is worth being direct here, because this is where the real debate lives.

Full autonomy — the Boston Dynamics, Tesla Optimus, Agility Robotics Digit vision — will absolutely win in bounded, repetitive, high-volume tasks. Warehouse corridor navigation. Perimeter patrol. Fixed-route deliveries in controlled environments. Where the task is well-defined, the environment predictable, and variation is low, the autonomous system will, over time, beat the hybrid model on unit economics. It runs continuously, does not fatigue, and does not need a co-operator.

| Dimension | Progressive Autonomy | Full Autonomy Strategy |

| Deploy readiness | Operational now; human judgment covers AI gaps | 3 to 7 years for unstructured environments |

| Edge case handling | Human intervenes; AI assists | Fails or requires extensive manual coding |

| Regulatory fit | Aligns with current oversight requirements | Ahead of regulatory frameworks in most sectors |

| Workforce narrative | Augmentation framing; lower resistance | Displacement framing; higher resistance |

| Unit economics at scale | Higher than full autonomy today | Wins once reliable in bounded, repetitive tasks |

| Liability clarity | Clear control authority with full audit log | Legally ambiguous under current frameworks |

| Platform moat | OEM-agnostic orchestration layer | Often a single-vendor closed stack |

The progressive model performs best where it matters most right now: complex, unstructured, high-risk, regulation-heavy environments where waiting for perfect autonomy is not an option. And critically, it is not in competition with full autonomy. It is the pathway to it. You build trust, accumulate data, refine the AI copilot, and progressively transfer tasks to the machine as evidence, regulation, and public trust support the handoff.

The Honest Assessment: What Could Go Wrong?

Any serious analysis has to include this. The risks are real enough to have sunk platforms with better fundamentals.

| Technical | Latency is the core physics problem. The speed of light sets a hard floor on round-trip delay. At 50 to 150 ms round-trip, haptic teleoperation is workable for most tasks. Beyond 200 ms, precision manipulation becomes genuinely difficult. Edge compute helps; AI latency compensation helps more. But this is not fully solved, and it will not be solved by software alone. Geography and infrastructure still constrain what is possible. |

| Human Factors | Haptic fatigue is real. Operators wearing exosuits for extended shifts experience musculoskeletal strain. The cognitive load of simultaneous haptic control and visual monitoring is significant. Published research on safe operating durations is thin. The ecosystem’s commercial case rests on safe, scalable human performance, and the human factors science has not kept pace with the hardware. |

| Regulatory | BVLOS (beyond visual line of sight) drone regulation is evolving, and slowly. Remote operator robots in care settings are almost entirely ungoverned. Defence applications carry export control risk. Each jurisdiction runs on its own timeline, its own framework, its own political appetite. A platform that works in the UK may face an 18-month regulatory review before it can operate in Germany, Japan, or the United States. |

| Adoption | Workforce acceptance risk is consistently underestimated. A full-autonomy narrative triggers displacement anxiety. A remote embodiment narrative can trigger surveillance anxiety, particularly in domestic and care settings. Who is watching? Can the operator see inside my home? What happens to that footage? Trust is not given. It has to be designed in, earned over time, and governed visibly. |

| Commercial | Procurement cycles in industrial and defence sectors average 18 to 36 months. Healthcare procurement runs longer. A platform that is technically ready but commercially mis-positioned, approaching the wrong budget holder or using the wrong commercial model, can stall indefinitely. The difference between capex and opex framing, innovation budget versus maintenance budget, can determine whether a deal closes or collapses. |

| Competitive | Boston Dynamics, DJI, Agility Robotics, and Apptronik are all building toward greater autonomy. But the more credible threat comes from big-tech platform players. Amazon, Google, and Microsoft could enter the orchestration layer with scale advantages no startup can match. The defensible moat must be built in data, certification frameworks, operator networks, and domain-specific AI, not in hardware that can be commoditised. |
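The speed-of-light constraint in the Technical row can be made concrete with a rough calculation. The sketch below estimates only the propagation part of the round trip, assuming the signal travels through optical fibre at roughly two thirds of the speed of light in a vacuum and follows a path equal to the stated distance. Routing detours, switching, encoding, and processing all add to this figure. The 150 ms and 200 ms thresholds come from the text above; the "marginal" label for the band in between is an assumption of this example.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in a vacuum
FIBRE_FACTOR = 2 / 3               # assumption: light in fibre travels at about 2/3 c
KM_PER_MILE = 1.609344


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip delay from propagation alone, in milliseconds."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_S * FIBRE_FACTOR)
    return 2 * one_way_s * 1000


def classify(rtt_ms: float) -> str:
    """Map a round-trip time onto the bands described in the text."""
    if rtt_ms <= 150:
        return "workable"
    if rtt_ms <= 200:
        return "marginal"   # assumption: the text does not name this band
    return "difficult"


# A specialist three thousand miles from the asset.
rtt_3000_miles = min_round_trip_ms(3000 * KM_PER_MILE)
band = classify(rtt_3000_miles)
```

Under these assumptions, three thousand miles costs a little under 50 ms before any equipment adds its own delay, which is already at the bottom of the workable range. A 20,000 km path, roughly the far side of the planet, comes out at about 200 ms from propagation alone.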

Research Frontiers: Open Questions

For those building in this space, these are the questions without good answers yet:

- What is the optimal human-to-machine ratio for supervised autonomy at scale? Research exists for air traffic control and nuclear operations, but not for robot fleet supervision in unstructured environments.

- How does haptic latency tolerance vary by task type and operator skill level? Current thresholds are engineering estimates. Clinical-grade data on manipulation accuracy versus latency is sparse.

- Can digital twin pre-training genuinely substitute for physical environment experience, and if so, to what extent and for which task categories?

- What are the cognitive load signatures of mode-switching — moving between teleoperation, AI-assisted operation, and supervised autonomy — and how do you detect operator overload before it produces error?

- How do different cultures respond to remote embodiment in care settings? Japan is instructive but not generalisable. Nordic countries, the Gulf states, and Sub-Saharan Africa will have different adoption dynamics entirely.

- What is the minimum viable network quality of service for each task class, and can LEO satellite connectivity deliver it consistently enough for surgical-precision teleoperation?

- What does the operator labour market look like at scale? Is certified remote robot operation a sustainable professional category, or does it commoditise into a low-wage gig role?

The Economics: Where the Money Lands First

Offshore inspection is the clearest near-term case. A single specialist helicopter visit to a North Sea platform costs between £15,000 and £40,000 when you account for aviation, accommodation, permits, and specialist time. A remote inspection using a drone and an operator trained in the platform’s digital twin can replace a substantial fraction of those visits. At ten visits per asset per year across a fleet of a hundred assets, the arithmetic is not complicated.
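The arithmetic can be written out as a short sketch. The visit count, fleet size, and cost range are taken from the paragraph above. The share of visits that remote inspection replaces is not stated in the text, so `replaced_fraction` is a purely hypothetical parameter, and the result is gross of platform and operator costs.

```python
def annual_visit_cost(visits_per_asset: int, assets: int, cost_per_visit_gbp: int) -> int:
    """Total yearly spend on in-person visits across the fleet, in pounds."""
    return visits_per_asset * assets * cost_per_visit_gbp


def gross_saving(annual_cost_gbp: int, replaced_fraction: float) -> float:
    """Spend avoided if a given share of visits is done remotely (before platform fees)."""
    return annual_cost_gbp * replaced_fraction


# Figures from the text: ten visits per asset per year, a hundred assets,
# and a cost of £15,000 to £40,000 per visit.
low = annual_visit_cost(10, 100, 15_000)
high = annual_visit_cost(10, 100, 40_000)

# Hypothetical assumption for illustration only: half of visits are replaced.
saving_low = gross_saving(low, 0.5)
saving_high = gross_saving(high, 0.5)

print(f"Annual visit spend: £{low:,} to £{high:,}")
print(f"Gross saving at 50% replacement: £{saving_low:,.0f} to £{saving_high:,.0f}")
```

That is a thousand visits a year and a baseline spend of £15 million to £40 million for the fleet. The real saving depends on the replacement share and on what the platform and operator time cost, neither of which is specified here.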

Confined space entry is comparably compelling. Every human entry requires a permit to work, a standby team, breathing apparatus, and liability exposure. A robot replaces the entry. The cost is operating time and the platform fee.

| The business model that works here is not hardware sales. It is Platform-as-a-Service for the software and orchestration layer, Robot-as-a-Service for the hardware fleet, and an operator marketplace that takes commission on certified remote deployments. Three revenue streams, a compounding data asset, and switching costs that increase as twin models deepen. |

Elder care economics run on a longer cycle — 24 to 36 months to first commercial contracts — but the addressable market is enormous. Japan alone has a care workforce shortfall measured in hundreds of thousands. At modest per-hour rates for remote care operator time, the market potential runs into the billions.

The Bigger Picture

What keeps coming back, underneath all the market analysis and technical detail, is a simpler frame.

The debate about robotics tends to focus on machines — what they can do, what they cannot, when they will be ready, who is winning the race. But the more useful frame is human capability extension. What becomes possible when a skilled person in Manchester can be present in a mine in South Africa, a hospital in Tokyo, or a disaster zone in Turkey — not on a video call, but through a machine that gives them genuine presence, sensation, and agency?

| This is not about replacing humans. It is about extending what humans can do — across distance, across hazard, across the limits of geography and physical presence. |

The progressive autonomy model is not a compromise position. It is a recognition of where we actually are. Autonomy is coming — it will be more capable, more affordable, and over time will handle more of what humans currently do remotely. But the transition is not instant, and the transition itself generates enormous value. Every pilot that runs, every digital twin that is built, every operator who trains: that is data. That is trust. That is the foundation that full autonomy will eventually stand on.

The question is not autonomy versus human control. The question is how we build the bridge responsibly, commercially, and at scale — in a way that captures value now and positions for the future. That is the ecosystem. That is the platform. That is the opportunity.

More to come in the next issue. We have not yet touched swarm coordination, underwater autonomy, or what the operator gig economy looks like at scale.

Conclusion: The Bridge Is the Destination

The robotics industry has spent decades chasing full autonomy as the promised land. And full autonomy will arrive — for bounded tasks, predictable environments, high-volume repetition. But the world is mostly unbounded, unpredictable, and low-volume. It is offshore rigs, not Amazon warehouses. It is elder care, not car manufacturing. It is bomb disposal, not corridor navigation.

In that world — the real world — the value is not in replacing humans. It is in extending them. Extending their judgment across distance. Their sensation across hazard. Their skills across geography and time zones.

The Progressive Autonomy Ecosystem is not a compromise. It is a recognition that the transition itself creates enormous value. Every pilot builds data. Every twin builds trust. Every trained operator builds the workforce that supervised autonomy will eventually depend on. The question is not whether autonomy wins. It is whether we build the bridge responsibly, commercially, and at scale — capturing value now while positioning for the future.

Next Steps: From Vision to Execution

Documents do not change industries. Deployment does. Here is what that looks like:

1. Beachhead Selection

Three initial verticals for controlled pilots:

- Defence and Hazardous Operations — demining, CBRN reconnaissance, EOD support.

- Industrial Inspection — offshore energy, confined spaces, high-value asset monitoring.

- Logistics Specialist Support — remote yard operations, exception handling, skilled labour pooling.

Each has clear ROI, established regulatory pathways, and partners already working in the space.

2. Partner Ecosystem Formation

Build a coalition of willing partners — haptics vendors, robot OEMs, telcos, cloud providers, digital twin platforms, and enterprise buyers who share the vision and want to co-develop integration standards, certification frameworks, and commercial models.

3. MVP Development

Specify the Minimum Viable Platform: the orchestration layer, device abstraction APIs, core AI copilot functions, and digital twin integration, targeting first deployments within 12 to 18 months.

4. Research Agenda

Commission work on the open questions:

- Haptic latency tolerance by task class

- Operator cognitive load and safe shift durations

- Digital twin transferability to physical environments

- Cultural acceptance of remote embodiment in care settings

- Explore 6G and 7G telecoms protocols for transmission redundancy

5. Regulatory Engagement

Initiate conversations with:

- FAA / EASA / CAA on BVLOS pathways

- EU AI Office on high-risk AI compliance

- National defence procurement agencies on safe teleoperation standards

- Care quality commissions on domestic deployment frameworks

Related Documentation

Supporting research, frameworks, and references. This section will be updated as the series develops.

| Category | Document / Resource | Status | Reference |

| Master Framework | Progressive Autonomy Ecosystem — Vision v2.0 | Published | Internal — see artefacts |

| Technical Reference | Assessment & Implementation Framework | Published | Internal — see artefacts |

| Market Analysis | Haptic Teleoperation — PESTLE / SWOT Deep Dive | Published | Internal — see artefacts |

| Use Case Library | Robot & Drone Formats and Use Cases | Published | Internal — see artefacts |

| Regulatory | BVLOS Drone Regulation — FAA / EASA / CAA | Pending | — |

| Research | Haptic fatigue and operator cognitive load studies | Pending | — |

| Competitive | Boston Dynamics / Agility / Apptronik landscape | Pending | — |

| Economic Model | Unit economics by sector and control mode | Pending | — |

| Standards | ROS 2, MAVLink, IEC 61508 (SIL) references | Pending | — |

| Case Studies | Offshore inspection deployment benchmarks | Pending | — |

| Policy | AI liability frameworks — EU AI Act, UK AI White Paper | Pending | — |

| Human Factors | Operator ergonomics and shift design research | Pending | — |