A governance-first, simulation-validated blueprint for agents with identity, homeostasis, social reasoning, and long-horizon planning.

Preamble

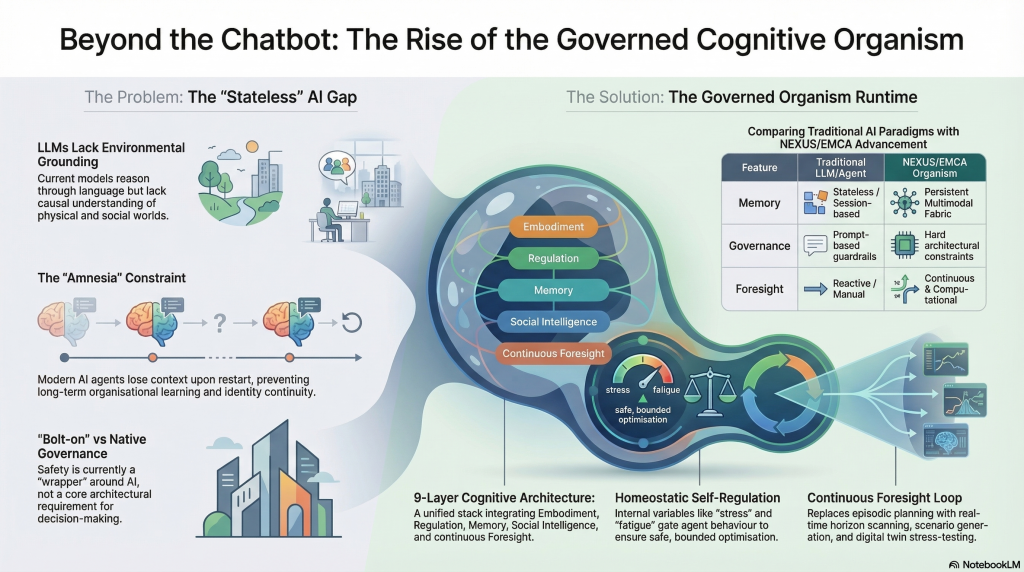

Most “agent” talk today assumes that intelligence equals language fluency plus tool use. That gets you impressive demos, but it breaks down when you need reliability over weeks, safety under pressure, and consistent behaviour across changing environments.

A better frame is to treat an advanced agent as a governed organism. It needs internal regulation, persistent memory, identity coherence, and decision provenance. It also needs a foresight capability that runs continuously, not as a one-off report. That is the core promise of the unified architecture described here: a coherent stack that combines embodied cognition, homeostatic alignment, multi-agent social reasoning, formal governance, and a foresight operating system, all deployed over distributed compute.

As usual, some supporting documents (artifacts) are attached. They run in this order:

Core architecture → extensions → governance → foresight → business strategy → implementation.

📘 Table: Attached Documents — Titles, Descriptions & Suggested Reading Order

| # | Document Title | Short Description | Suggested Reading Order |
|---|---|---|---|
| 1 | A Unified Architecture for Governed, Embodied, Foresight‑Driven Artificial Agents (EMCAFOS) | The foundational academic-style paper defining EMCA: layers, homeostasis, governance, foresight, operational semantics, evaluation. | 1 — Start here (core architecture) |
| 2 | Architecture Diagram Set | Visual diagrams (ASCII + SVG) showing the EMCA layers, data flows, governance pipeline, multi-agent topology, and ForesightOS. | 2 — Visual grounding |
| 3 | Concept Unified Architecture White Paper | A synthesis that merges EMCA with LLMs, foresight, distributed cognition, identity, and governance. Expands the architecture into a unified cognitive organism. | 3 — Conceptual expansion |
| 4 | Critical Analysis of EMCA: Case For and Against | A rigorous critique outlining strengths, weaknesses, feasibility issues, market risks, and scientific concerns. | 4 — Balanced perspective |
| 5 | EMCA + LLM + ForesightOS Unified Architecture White Paper | A full, structured white paper integrating EMCA with LLM interfaces and ForesightOS. More formal and complete than the concept paper. | 5 — Full integrated architecture |
| 6 | EMCA + LLM Interface — Stakeholder & Positioning | Market positioning, competitive quadrant, stakeholder needs, and product thesis for EMCA as an enterprise offering. | 6 — Market framing |
| 7 | EMCA + LLM Interface — New Use Cases | Detailed use cases unlocked by adding an LLM interface to EMCA: digital twin operator, crisis planner, autonomous orchestrator, etc. | 7 — Applied scenarios |
| 8 | Foresight Nexus Vision Document | Vision for a strategic intelligence ecosystem (NEXUS) focused on foresight, scenario planning, adaptive roadmapping, and organizational learning. | 8 — Foresight layer deep dive |
| 9 | NEXUS Gap Analysis | Identifies missing elements in the NEXUS proposal: ICP, unit economics, security architecture, evaluation standards, connectors, governance lifecycle. | 9 — Gaps & realism check |
| 10 | NEXUS Platform — Governed Intelligence Fabric | A full platform vision: memory, identity, social intelligence, legacy, governance, robotics, and multi-agent ecosystems. Very broad and ambitious. | 10 — Platform-level expansion |
| 11 | Regulation and Internal Variables | Deep dive into homeostasis: modeling internal variables, reward shaping, multi-objective alignment, IoT integration, noise filtering, time-scale control. | 11 — Technical deep dive (regulation) |
| 12 | Spec: Internal Regulation | Formal specification of the Regulation Layer: variables, equations, APIs, timescales, integration with IoT and governance. | 12 — Formal spec (regulation) |
| 13 | The Full Technical Specification | IEEE-style full technical spec of EMCA–LLM–FOS: modules, APIs, algorithms, operational semantics, safety, performance, deployment. | 13 — Full system spec |
| 14 | Unified Vision and Business Case Document | Strategic vision + business case for EMCA: markets, commercialization, risks, ROI, long-term ambition. | 14 — Business strategy |
| 15 | When is EMCA Worth It (and When Is It Overkill?) | Practical decision guide: where EMCA creates value, where simpler AI is enough, ROI considerations, domain-specific recommendations. | 15 — Practical adoption guide |

⭐ Recommended Reading Flow (High-Level)

Phase 1 — Understand the Architecture

- EMCAFOS (core architecture)

- Architecture Diagram Set

- Concept Unified Architecture White Paper

Phase 2 — Evaluate Strengths & Weaknesses

- Critical Analysis of EMCA

Phase 3 — Understand the Full Integrated System

- EMCA + LLM + ForesightOS Unified Architecture White Paper

Phase 4 — Market, Positioning, Use Cases

- Stakeholder & Positioning

- New Use Cases

Phase 5 — Foresight & Strategic Intelligence

- Foresight Nexus Vision

- NEXUS Gap Analysis

- NEXUS Platform

Phase 6 — Technical Deep Dives

- Regulation & Internal Variables

- Regulation Layer Spec

- Full Technical Specification

Phase 7 — Business & Adoption

- Unified Vision & Business Case

- When EMCA Is Worth It

Introduction and Analysis

1. Why current paradigms fail in long horizon, high stakes settings

LLM centric agents

They reason and communicate well, but they lack grounding, stable internal state, and verifiable action selection. They can explain a decision after the fact, but they cannot guarantee that the decision followed policy.

RL centric agents

They can optimise behaviour in bounded environments, but they struggle with interpretability, governance, and long horizon identity continuity. They also tend to overfit reward proxies unless you design strong constraints.

Digital twins without native intelligence

They simulate well, but they do not choose actions under governance. They also do not learn and adapt as an organism across scenarios.

The unified architecture closes these gaps by treating language as an interface layer, not the brain.

2. The unified stack in plain terms

The architecture is modular and layered. Each layer has a clear contract, typed message passing, and replaceable implementations. The key layers are:

- LLM Interface Layer

Turns human intent into structured tasks. Produces explanations, plans, and interaction management.

- Governance and Safety Layer

The non negotiable gatekeeper. Every candidate action is checked before execution. This is where “safety by architecture” lives.

- Social and Multi Agent Reasoning Layer

Handles negotiation, norms, coordination, reputation, and group strategy.

- Memory and Identity Layer

Stores episodic traces, semantic knowledge, and identity anchors. Tracks drift and narrative coherence.

- Regulation Layer (Homeostasis)

Maintains internal variables like cognitive budget, fatigue, stress, environmental risk, and compliance margin. Uses them as first class state, not as logs.

- Cognition Layer

Plans, selects policies, manages goals, and integrates foresight outputs into decision making.

- Embodiment Layer

Connects to sensors, actuators, IoT streams, and digital twin state. It is the grounding bridge.

- Foresight Operating System (ForesightOS)

Runs horizon scanning, scenario generation, stress testing, and extrapolation. Produces a distribution of plausible futures, not a single forecast.

- Distributed Compute and AI Native OS

Executes components across devices and environments. Manages isolation, orchestration, and intent aware scheduling.
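To make "clear contract, typed message passing, and replaceable implementations" concrete, here is a minimal sketch in Python. The `Message` envelope, the `Layer` protocol, and both stand-in classes are hypothetical names chosen for illustration. They are not taken from the EMCA specification.

```python
from dataclasses import dataclass
from typing import Protocol, Sequence


@dataclass(frozen=True)
class Message:
    """Typed envelope passed between layers (hypothetical schema)."""
    sender: str
    kind: str       # e.g. "intent", "task", "verdict"
    payload: dict


class Layer(Protocol):
    """The contract: any object with a name and a handle() method is a layer."""
    name: str

    def handle(self, msg: Message) -> Message: ...


class ThinInterface:
    """Stand-in for the LLM Interface Layer: turns raw intent into a task."""
    name = "llm_interface"

    def handle(self, msg: Message) -> Message:
        goal = msg.payload["text"].strip().lower()
        return Message(self.name, "task", {"goal": goal})


class AllowAllGovernance:
    """Stand-in gatekeeper. A real one would check rules before approving."""
    name = "governance"

    def handle(self, msg: Message) -> Message:
        return Message(self.name, "verdict", {"approved": True, "task": msg.payload})


def run_pipeline(layers: Sequence[Layer], msg: Message) -> Message:
    """Pass one message through each layer in order."""
    for layer in layers:
        msg = layer.handle(msg)
    return msg


result = run_pipeline(
    [ThinInterface(), AllowAllGovernance()],
    Message("human", "intent", {"text": "  Inspect Pump 3 "}),
)
```

Because each layer only depends on the `Message` type and the `handle` signature, you can swap `AllowAllGovernance` for a stricter implementation without touching the interface layer.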

If you want a single sentence: the agent perceives and acts like an embodied system, regulates itself like an organism, plans like a strategist, anticipates like a foresight team, and behaves like a governed institution.

3. The formal core, what is “state” in this system

A key move is formalising the agent as a stateful system with explicit variables:

- Environment state

- Internal homeostatic vector

- Memory stores

- World model

- Policy

- Compliance and governance state

That matters because it forces design discipline. You can test each variable, measure drift, and audit how changes in internal state affect action selection.
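As a rough sketch of what "explicit variables" can look like in code, the six components above fit into one container. The field names, the field types, and the `homeostatic_drift` helper are assumptions for illustration. The formal definitions live in the technical specification.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    """One snapshot of the agent, with each state component as a named field."""
    environment: dict = field(default_factory=dict)
    homeostasis: dict = field(default_factory=dict)   # internal homeostatic vector
    memory: list = field(default_factory=list)        # memory stores
    world_model: dict = field(default_factory=dict)
    policy: str = "default"
    compliance: dict = field(default_factory=dict)    # compliance and governance state


def homeostatic_drift(before: AgentState, after: AgentState) -> dict:
    """Per-variable change in the homeostatic vector between two snapshots."""
    return {
        name: value - before.homeostasis.get(name, 0.0)
        for name, value in after.homeostasis.items()
    }


s0 = AgentState(homeostasis={"stress": 0.25, "fatigue": 0.5})
s1 = AgentState(homeostasis={"stress": 0.5, "fatigue": 0.5})
drift = homeostatic_drift(s0, s1)
```

Here `drift` reports a rise of 0.25 in stress and no change in fatigue, which is the kind of measurement you can assert on in a test or attach to an audit record.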

4. Homeostatic alignment, bounded objectives instead of brittle goals

Instead of optimising only task reward, the architecture introduces a penalty for internal instability. You can treat stress, uncertainty, fatigue, environmental risk, and compliance margin as variables with set points. When the system deviates, it pays a cost.

This is a practical answer to a common failure mode. Agents chase short term wins that create long term risk. Homeostatic penalties make the agent value stability, compliance headroom, and safe pacing.
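One simple way to express that cost is a weighted quadratic penalty on the distance from each set point, subtracted from the task reward. The quadratic form, the `lam` trade-off weight, and the set point values below are all assumptions for illustration. The source documents may define the penalty differently.

```python
# Hypothetical set points for the internal variables named in the text.
SET_POINTS = {
    "stress": 0.2,
    "uncertainty": 0.3,
    "fatigue": 0.2,
    "environmental_risk": 0.1,
    "compliance_margin": 0.8,
}


def homeostatic_penalty(state: dict, set_points: dict, weights: dict = None) -> float:
    """Weighted sum of squared deviations from the set points."""
    weights = weights or {}
    return sum(
        weights.get(name, 1.0) * (state[name] - target) ** 2
        for name, target in set_points.items()
    )


def shaped_reward(task_reward: float, state: dict, set_points: dict, lam: float = 1.0) -> float:
    """Task reward minus the cost of internal instability."""
    return task_reward - lam * homeostatic_penalty(state, set_points)


# A steady plan leaves the agent at its set points.
steady_state = dict(SET_POINTS)
# A risky plan earns more task reward but spikes stress and burns compliance margin.
risky_state = dict(SET_POINTS, stress=0.9, compliance_margin=0.2)

steady = shaped_reward(1.0, steady_state, SET_POINTS)
risky = shaped_reward(1.5, risky_state, SET_POINTS)
```

With these numbers the risky plan scores below the steady one despite its higher task reward, which is the short-term-win failure mode the penalty is meant to suppress.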

In deployment terms, this gives you behaviours like:

- slowing down under uncertainty

- deferring risky actions when compliance margin shrinks

- escalating to humans earlier when internal stress rises

- preferring robust plans that survive multiple futures

5. Governance first design, the Action Verifier Kernel idea

A credible autonomy stack needs a hard boundary between “proposed” and “allowed.”

This architecture implements that boundary as an action verifier kernel that checks candidate actions against:

- policy rules

- risk thresholds

- compliance margins

- consistency checks across modalities and world model assumptions

That creates auditability by design. You do not just log what happened. You can show why an action passed verification, and which constraint would have blocked it.
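A minimal sketch of such a kernel follows. The `Action`, `Constraint`, and `Verdict` types, the thresholds, and the forbidden action name are hypothetical. Cross-modal consistency checks are left out to keep the example short.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Action:
    name: str
    risk: float               # estimated risk in [0, 1]
    compliance_margin: float  # headroom left after the action


@dataclass(frozen=True)
class Constraint:
    name: str
    check: Callable[[Action], bool]  # True means the action satisfies it


@dataclass(frozen=True)
class Verdict:
    allowed: bool
    passed: tuple      # names of constraints the action satisfied
    blocked_by: tuple  # names of constraints that rejected it


def verify(action: Action, constraints: Sequence[Constraint]) -> Verdict:
    """Evaluate every constraint so the record shows the full picture."""
    results = [(c.name, c.check(action)) for c in constraints]
    passed = tuple(name for name, ok in results if ok)
    blocked_by = tuple(name for name, ok in results if not ok)
    return Verdict(allowed=not blocked_by, passed=passed, blocked_by=blocked_by)


FORBIDDEN = {"override_interlock"}  # hypothetical policy rule
CONSTRAINTS = (
    Constraint("policy_rule", lambda a: a.name not in FORBIDDEN),
    Constraint("risk_threshold", lambda a: a.risk <= 0.3),
    Constraint("compliance_margin", lambda a: a.compliance_margin >= 0.2),
)

ok = verify(Action("restart_pump", risk=0.1, compliance_margin=0.5), CONSTRAINTS)
blocked = verify(Action("open_valve", risk=0.6, compliance_margin=0.5), CONSTRAINTS)
```

The kernel deliberately does not stop at the first failure. Evaluating every constraint means the verdict for `open_valve` names `risk_threshold` as the blocker while still recording that the other two checks passed.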

6. ForesightOS, turning foresight into a continuous machine process

Most organisations run foresight as workshops and reports. This architecture operationalises it as a continuous runtime.

ForesightOS does five things repeatedly:

- scans signals

- clusters trends

- generates scenarios

- stress tests plans

- extrapolates trajectories

It outputs a distribution over plausible futures. The cognition layer uses that distribution to plan robust strategies, not fragile best case plans.

This is how you get anticipatory intelligence that can answer, “What could break next,” and “Which option stays safe across futures.”
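The difference between a robust plan and a fragile best-case plan can be shown with a toy scenario distribution. The scenario names, probabilities, and payoffs below are invented for illustration, and the worst-case (maximin) rule is only one of several possible robustness criteria.

```python
# Hypothetical ForesightOS output: scenario -> probability.
SCENARIOS = {"baseline": 0.6, "supply_shock": 0.3, "demand_surge": 0.1}

# Hypothetical payoff of each plan under each scenario.
PAYOFFS = {
    "lean_inventory": {"baseline": 10.0, "supply_shock": -8.0, "demand_surge": -4.0},
    "buffered_inventory": {"baseline": 7.0, "supply_shock": 4.0, "demand_surge": 5.0},
}


def best_case(plan: str) -> float:
    """Payoff if the most favourable future happens."""
    return max(PAYOFFS[plan].values())


def worst_case(plan: str) -> float:
    """Payoff if the least favourable future happens."""
    return min(PAYOFFS[plan].values())


def expected(plan: str) -> float:
    """Probability-weighted payoff across the scenario distribution."""
    return sum(p * PAYOFFS[plan][name] for name, p in SCENARIOS.items())


fragile_choice = max(PAYOFFS, key=best_case)   # optimises for the best case only
robust_choice = max(PAYOFFS, key=worst_case)   # stays acceptable in every future
```

In this toy setup the best-case rule picks `lean_inventory` and the worst-case rule picks `buffered_inventory`, which also has the higher expected payoff once the shock scenarios are weighted in.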

7. Operational semantics, how the loop actually runs

Each timestep follows a disciplined loop:

- Observe environment

- Update world model

- Update internal regulation state

- Generate candidate plans

- Verify plans via governance

- Execute only safe actions

- Log decisions with provenance

- Update memory and identity

- Run foresight processes asynchronously

This is important because it makes the system testable. You can simulate it, inject failures, and measure compliance.
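The loop above can be sketched as a single `step` function. Everything here is a stand-in: the dictionary-based state, the stress update rule, the `propose` and `verify` stubs, and the queue that represents handing work to foresight. A real implementation would run foresight in a separate process or task.

```python
FORBIDDEN = {"override_interlock"}  # hypothetical policy rule


def propose(state: dict) -> list:
    """Stub planner: always offers the same two candidate actions."""
    return ["inspect_pump", "override_interlock"]


def verify(action: str, state: dict) -> tuple:
    """Stub governance check: returns (allowed, reason)."""
    if action in FORBIDDEN:
        return False, "policy_rule"
    return True, "all_checks_passed"


def step(state: dict, observation: dict, log: list) -> list:
    """Run one timestep and return the actions that were executed."""
    # Observe the environment and update the world model.
    state["world_model"].update(observation)
    # Update internal regulation state (assumed rule: alarms raise stress).
    state["stress"] = min(1.0, state["stress"] + 0.25 * observation.get("alarm", 0))
    executed = []
    # Generate candidates, verify each one, and log the decision with its reason.
    for action in propose(state):
        allowed, reason = verify(action, state)
        log.append({"t": state["t"], "action": action, "allowed": allowed, "reason": reason})
        if allowed:
            executed.append(action)  # only verified actions are executed
    # Update memory, then hand the timestep to foresight without waiting for it.
    state["memory"].append({"t": state["t"], "executed": list(executed)})
    state["foresight_queue"].append(state["t"])
    state["t"] += 1
    return executed


state = {"t": 0, "world_model": {}, "stress": 0.0, "memory": [], "foresight_queue": []}
log = []
executed = step(state, {"pump_temp": 91, "alarm": 1}, log)
```

After one step the log holds two entries, one allowed and one blocked with its reason, so a failure-injection test can assert on both what ran and what was refused.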

8. Evaluation, what “good” looks like

The architecture proposes measurable metrics across five dimensions:

- Homeostatic stability: internal variables remain within bounds over time

- Foresight accuracy: predicted trajectories match realised outcomes over rollouts

- Governance compliance: percentage of actions that pass checks, plus quality of blocks and escalations

- Identity coherence: controlled drift, stable self model, consistent narrative decisions

- Multi agent coordination: welfare, fairness, and coordination outcomes in group tasks

This is a strong shift from leaderboard thinking. You stop grading only output quality. You grade stability, safety, and long run coherence.
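Three of these metrics are simple enough to sketch directly. The function names, the input shapes, and the use of mean absolute error for foresight accuracy are assumptions for illustration. The evaluation sections of the source documents may define them differently.

```python
def homeostatic_stability(trace: list, bounds: dict) -> float:
    """Fraction of timesteps where every internal variable is within bounds."""
    in_bounds = [
        all(low <= snapshot[name] <= high for name, (low, high) in bounds.items())
        for snapshot in trace
    ]
    return sum(in_bounds) / len(in_bounds)


def compliance_rate(decision_log: list) -> float:
    """Share of candidate actions that passed governance checks."""
    return sum(1 for entry in decision_log if entry["allowed"]) / len(decision_log)


def foresight_error(predicted: list, realised: list) -> float:
    """Mean absolute error between predicted and realised values (lower is better)."""
    return sum(abs(p - r) for p, r in zip(predicted, realised)) / len(predicted)


BOUNDS = {"stress": (0.0, 0.7), "fatigue": (0.0, 0.8)}
trace = [
    {"stress": 0.2, "fatigue": 0.3},
    {"stress": 0.5, "fatigue": 0.4},
    {"stress": 0.9, "fatigue": 0.4},  # stress leaves its band here
    {"stress": 0.6, "fatigue": 0.5},
]
decision_log = [{"allowed": True}, {"allowed": True}, {"allowed": False}, {"allowed": True}]

stability = homeostatic_stability(trace, BOUNDS)
compliance = compliance_rate(decision_log)
error = foresight_error([1.0, 2.0], [1.5, 2.0])
```

A raw pass rate is only half of the governance metric described above. The quality of blocks and escalations needs labelled cases and is not covered by this sketch.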

9. Where this becomes real, not just theory

The proposed experiment settings map directly to high value applications:

- Industrial operations digital twins: planning maintenance, preventing cascading failures

- Hospital patient flow simulation: staffing, resource allocation, surge planning

- Energy grid scenarios: stability, resilience, contingency playbooks

- Crisis response simulations: evacuation strategies, logistics, coordination across agencies

- Multi agent negotiation environments: procurement, scheduling, conflict resolution

The common need is the same. Safe autonomy under uncertainty, with auditable decisions.

Conclusion

A unified cognitive foresight architecture is not about adding more tools to an LLM. It is about designing an organism-like agent that can operate continuously, regulate itself, remember responsibly, coordinate socially, anticipate futures, and stay inside hard governance constraints.

This stack matters because it turns the usual tradeoff on its head. You do not need to choose between capability and safety. You can build capability through governance, by making verification, regulation, memory, and foresight part of the core runtime.

If you want agents that run in real systems, not just demos, this is the direction that makes engineering sense.

Next steps

- Define the minimum viable governed agent

Implement the action verification kernel plus a basic memory and identity layer. Keep the LLM layer as a thin interface.

- Build the regulation vector early

Choose 5 to 8 internal variables with set points. Start measuring them from day one, even if the control policies are simple.

- Start in a digital twin, not production

Use simulation first validation. Build stress scenario libraries and long horizon rollouts.

- Create governance artifacts that compile

Turn policies into typed rules and testable constraints. Add provenance logging as a non optional requirement.

- Add ForesightOS as a service loop

Begin with scenario generation and stress testing for a single domain. Expand to horizon scanning and trend clustering once you have stable evaluation.

- Publish a benchmark pack

A good next move is an open evaluation suite that tests safety, foresight, homeostatic stability, and identity coherence in shared environments.