Preamble

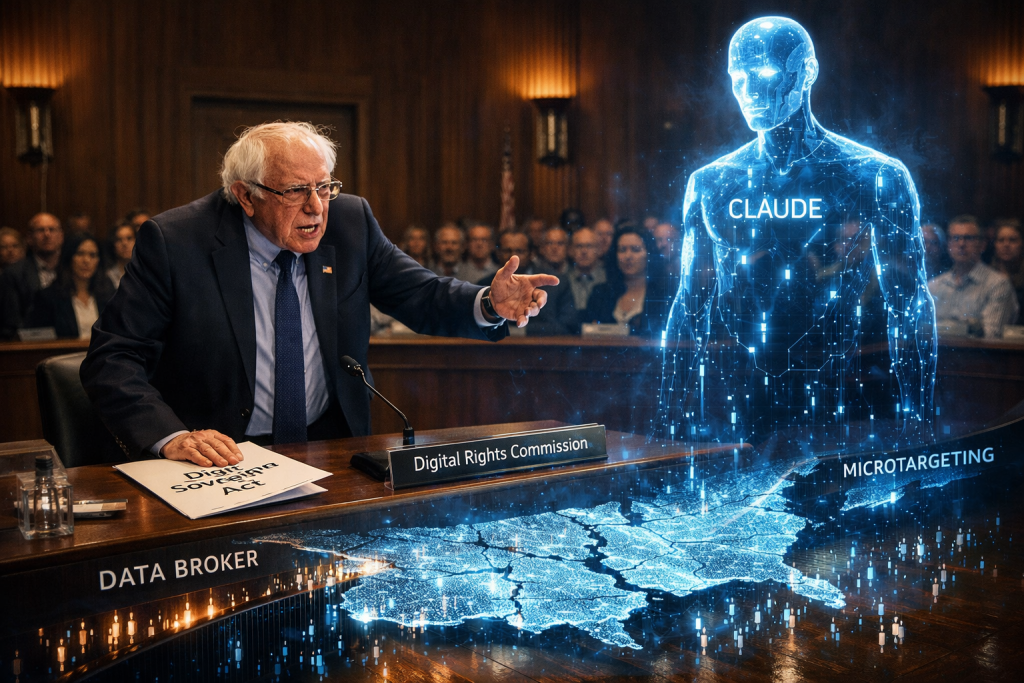

I watched Bernie vs. Claude on YouTube, and my takeaway was this: artificial intelligence is no longer a speculative technology at the margins of public life. It is rapidly becoming part of the infrastructure through which information is filtered, identities are inferred, choices are shaped, and power is exercised. Yet while AI systems promise efficiency, scale, and innovation, they also intensify longstanding questions about privacy, accountability, democratic legitimacy, and the concentration of technological power. These questions are no longer abstract. They are immediate, structural, and deeply political.

This white paper, Bernie vs Claude: A White Paper on AI-Driven Data Extraction, Democratic Risk, and the Case for a Digital Sovereignty Act, enters that debate from a clear premise: data extraction is not merely a commercial practice, but a governance issue with profound implications for civil rights, public trust, and democratic resilience. Using the widely resonant Sanders–Claude dialogue as a narrative point of departure, the paper examines how invisible systems of data collection, profiling, algorithmic targeting, and AI training are reshaping the relationship between citizens, markets, and the state.

At its core, this document argues that the current model of digital power is unsustainable. Individuals generate the behavioural, social, and expressive data that fuel contemporary AI systems, yet they remain largely excluded from meaningful control over how that data is collected, interpreted, monetised, and weaponised. The result is a widening asymmetry: platforms and data brokers gain unprecedented visibility into the lives of citizens, while citizens themselves operate in conditions of opacity, fragmented consent, and limited recourse.

The purpose of this paper is therefore twofold. First, it clarifies the scale and seriousness of the AI privacy crisis, especially where it intersects with political manipulation, democratic fragmentation, and regulatory capture. Second, it advances the Digital Sovereignty Act framework as a serious legislative and institutional response, one designed to translate digital rights from aspirational language into enforceable public infrastructure.

This is not an argument against innovation, nor a rejection of AI as such. It is an argument that technological advancement without democratic safeguards will deepen exploitation, weaken public institutions, and normalise forms of surveillance that free societies should reject. If AI is to remain compatible with liberty, dignity, and self-government, then the rules governing data, consent, profiling, and algorithmic power must be redesigned in the public interest. That is the task this white paper takes up.

As usual, some references and analysis:

| Name | Short description |
| --- | --- |
| Bernie vs Claude Global Legislator & Policy Architect framework | Profile and capability framework for a globally oriented legislator/policy architect with strengths in digital rights, AI governance, software fluency, interoperability, copyright, and cross-border regulation. Needed to advise on policy and legislation. |
| Bernie vs Claude The human in loop and governance and ethics | Governance framework for human-in-the-loop oversight, ethics, security, policy conformance, and change control for the Digital Governance & Enforcement Suite (DGE). |
| Bernie vs Claude White paper v2 | White paper on AI-driven data extraction, democratic risk, and the case for a Digital Sovereignty Act, using the Sanders–Claude dialogue as the narrative anchor. |
| Bernie vs Claude Business and system requirements (BRD SRS) | Business requirements and system architecture specification for the Digital Governance & Enforcement Suite, covering modules, AI services, compliance workflows, KPIs, budget, and phased delivery. |

A White Paper on AI-Driven Data Extraction, Democratic Risk, and the Case for a Digital Sovereignty Act

Prepared by: Steve Adenaike | March 2026

Conceptual Foundation:

- The Case for Digital Sovereignty: Why Your Online Life Should Be Portable and Owned by You: Digital Sovereignty Act Framework, Identity Integrity Manifesto

- Identity Integrity in the Age of Deepfakes, Why Your Face, Voice, and Likeness Need Legal Protection: Deepfake Governance Analysis

- Web Research

America is living through a silent data emergency. As Senator Bernie Sanders pressed AI assistant Claude in a widely circulated 2026 dialogue, the mechanics of how AI systems harvest, profile, and monetise individual data are largely invisible to the public, yet their consequences are profound, reaching from consumer manipulation to democratic destabilisation. This white paper synthesises the core issues raised in that exchange with the comprehensive policy architecture of the Digital Sovereignty Act framework, arguing that the United States urgently needs federal legislation with enforceable teeth: explicit consent mandates, algorithmic transparency requirements, meaningful deletion rights, and structural remedies for the political capture of the regulatory process itself.[1][2]

I. The Problem: What Americans Don’t Know Is Hurting Them

Senator Sanders asked a deceptively simple question: what would shock the American people about how their data is collected? The answer, as Claude acknowledged, is the sheer scale and invisibility of the surveillance infrastructure. Companies collect browsing history, location data, purchase records, search queries, dwell time on individual web pages, and social graph connections, then feed all of it into AI systems that construct detailed psychographic profiles without users ever meaningfully consenting.[1][3]

The Digital Sovereignty Act framework identifies this as a fundamental market failure: “users generate data that platforms monetise in multiple ways (direct advertising revenue, algorithm training data, third-party data sales), yet users receive virtually none of this value”. Social media companies earn an estimated $50–$200 per user per year from this extraction, while the individuals whose behaviour powers those models remain uncompensated and largely uninformed.[3][1]

The consent architecture currently governing data collection is structurally broken. Terms of service agreements routinely exceed the length of a novel and are written in legal language designed to obscure rather than inform. As Claude explained to Sanders, “most people click agree on terms of service without reading them” and have no idea their data is being combined with thousands of other data points to build AI profiles used to decide what ads they see, what prices they are charged, and what information is prioritised in their feeds.[1][3]

This is not a user education problem. It is a power asymmetry problem. The Digital Sovereignty Act framework proposes AI-powered Terms of Service analysis as a public utility: tools that automatically scan platform agreements against statutory rights, flag dark patterns designed to manipulate consent, and deliver plain-language summaries. Consent cannot be meaningful when the information required to give it is deliberately inaccessible.[4][2]

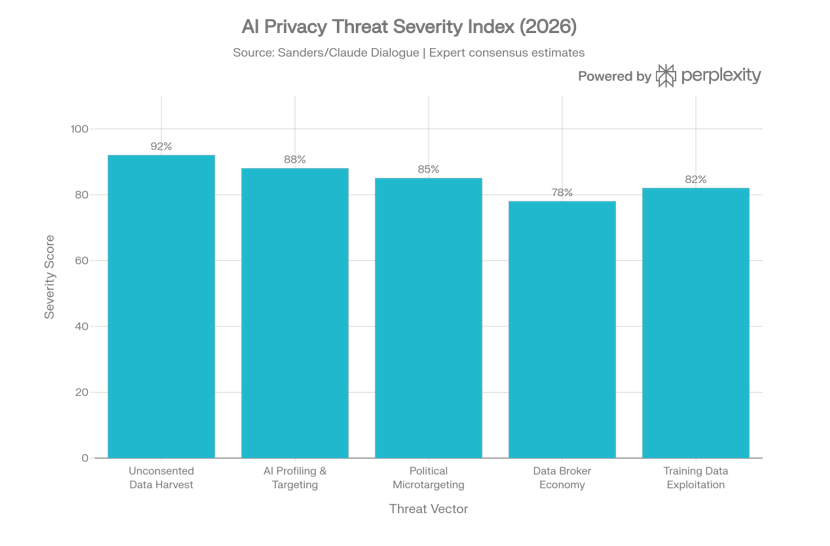

II. Five Threat Vectors: Anatomy of the AI Privacy Crisis

The Sanders–Claude dialogue touched on five distinct but interconnected harms produced by the current data ecosystem. Each is examined below.

1. Data Collection Without Informed Consent

The most foundational problem is data collection without genuine informed consent. Platforms operate on an opt-out model in which the default is total data sharing, and opting out requires navigating deliberately obscure menus. The Digital Sovereignty Act framework proposes a structural reversal: explicit opt-in consent as the legal default, with non-waivable rights that cannot be buried in terms of service. Any clause purporting to waive Tier One data rights covering all user-generated content would be void and unenforceable under the proposed framework.[4][1][2]
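To make the opt-in reversal concrete, here is a minimal sketch of what "opt-in as the default" could look like in software. The `Purpose` categories and the `ConsentRecord` class are hypothetical names chosen for illustration; the framework does not prescribe a data model.

```python
from dataclasses import dataclass, field
from enum import Enum


class Purpose(Enum):
    # Hypothetical processing purposes, named for illustration only.
    ADVERTISING = "advertising"
    AI_TRAINING = "ai_training"
    THIRD_PARTY_SALE = "third_party_sale"


@dataclass
class ConsentRecord:
    """Per-user consent state. Nothing is granted until the user opts in."""
    user_id: str
    granted: set[Purpose] = field(default_factory=set)

    def grant(self, purpose: Purpose) -> None:
        # Explicit opt-in for a single purpose.
        self.granted.add(purpose)

    def revoke(self, purpose: Purpose) -> None:
        # Withdrawing consent returns the purpose to the default (denied).
        self.granted.discard(purpose)

    def allows(self, purpose: Purpose) -> bool:
        # Processing is permitted only for purposes the user has granted.
        return purpose in self.granted


record = ConsentRecord(user_id="user-123")
print(record.allows(Purpose.AI_TRAINING))  # default state: no consent given
record.grant(Purpose.AI_TRAINING)
print(record.allows(Purpose.AI_TRAINING))
```

The design point is that the empty set is the starting state: a platform following this pattern would have to ask before processing, rather than process until told to stop.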

2. AI Profiling and Behavioural Targeting

Once collected, raw data is processed by AI into behavioural profiles of extraordinary granularity. These profiles are used to predict purchase decisions, price-discriminate between consumers, and engineer engagement through algorithmically curated feeds. The framework addresses this through mandatory Algorithmic Accountability provisions: users must receive explanations for why specific content was recommended, be able to access and correct profiling data used about them, and have the right to opt out to a non-personalised, chronological feed.[4][1][2]

3. Political Microtargeting and Democratic Fragmentation

The most alarming dimension of the Sanders exchange was the political threat. Claude described how AI profiling enables campaigns to identify voters based on specific psychological vulnerabilities (financial anxiety, institutional distrust, social isolation) and serve precisely crafted messages designed to exploit those vulnerabilities. Unlike broadcast political messaging, AI microtargeting allows completely different narratives to reach different voter segments simultaneously, “fragmenting shared reality” in ways that undermine the foundations of democratic deliberation. The Digital Sovereignty framework’s Age of Fragmented Selves analysis identifies this identity fragmentation as a civilisational risk, not merely a consumer harm.[1][5]

4. The Shadow Economy of Data Brokers

A shadow economy of data brokers buys and sells personal information about millions of Americans without their knowledge or consent. This market operates with almost no federal regulation, allowing the re-packaging and sale of profiles assembled from dozens of disparate sources. The proposed framework addresses this through mandatory third-party disclosure logs visible to each user in real time, and a requirement for platforms to notify third parties when a deletion request is received. Platforms must also prohibit the retention of “ghost profiles” or anonymised derivatives without explicit consent.[4][1][2]

5. AI Training Data Exploitation

As Sanders specifically raised, AI companies use personal conversations and interactions to train successive model generations, creating a core contradiction: companies claim to protect privacy while simultaneously monetising personal data to build more profitable products. The framework requires platforms to clearly document whether user data is used for AI training and provide opt-out mechanisms that are genuinely accessible, not hidden in tertiary settings menus.[4][1]

III. The Political Economy Problem

Why Voluntary Reform Will Not Work

Senator Sanders made a critical intervention that the white paper must confront directly: he rejected Claude’s initial suggestion that targeted regulations could be the solution, pointing out that AI companies are “pouring hundreds of millions of dollars into the political process to make sure that the safeguards… actually do not take place”. This is not a peripheral concern — it is the central structural obstacle to reform.[1]

The regulatory capture problem means that the conventional legislative pathway (propose sensible regulations, await industry compliance) will not produce timely or meaningful protection. The Strategic Response to Pushback document in this framework’s companion materials identifies the precise industry counter-arguments: that interoperability threatens security, that compliance costs destroy innovation, that users don’t actually want control. These arguments are coordinated, well-funded, and strategically timed to stall legislation during windows when reform momentum exists.[6]

Sanders pressed Claude on whether a moratorium on new AI data centres might be the pragmatic lever needed to force regulatory action. Claude ultimately conceded the point: when companies are spending hundreds of millions to block regulation, “a moratorium on new data centres is actually a pragmatic response to that problem: it forces a pause that gives lawmakers actual leverage to demand real protections”.[1]

This white paper takes a calibrated position: a full moratorium on AI data centre construction is a negotiating instrument, not an end-state policy goal. Its value lies in creating irreversible political pressure for a legislative package rather than in its direct effects. The threat of capacity constraints on AI expansion is one of the few countervailing forces that can match the lobbying power of the technology industry. Legislators should be prepared to put this instrument on the table credibly, even if the ultimate resolution is a negotiated regulatory framework rather than a construction freeze.[6]

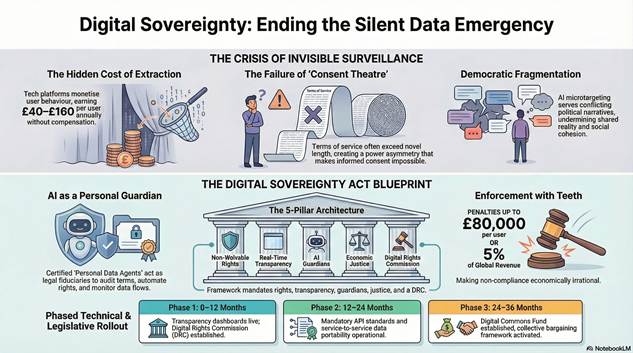

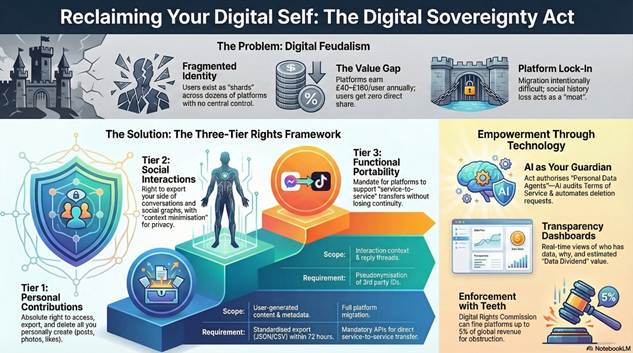

IV. The Policy Framework: What Meaningful Reform Looks Like

The Digital Sovereignty Act framework provides a detailed blueprint directly responsive to every concern Sanders raised. Its core architecture rests on five pillars.[2]

Pillar 1 — Non-Waivable Data Rights

A three-tier rights structure establishes irrevocable individual rights regardless of what terms of service say:[2]

- Tier One (Personal Archive): Complete access, frictionless export in machine-readable formats within 72 hours, true deletion with cryptographic proof, and unrestricted right of reuse for personal purposes

- Tier Two (Social Context): Portable interaction records with appropriate privacy protections for other parties, including anonymised social graph exports using persistent tokens

- Tier Three (Functional Portability): The right to transfer data directly to competing platforms, carry social connections, and import data into any covered platform[4]
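The Tier Two idea of an anonymised social graph export "using persistent tokens" can be sketched as keyed pseudonymisation. The example below is an illustration under stated assumptions, not the framework's specified mechanism: it assumes a per-user secret `export_key` and uses HMAC-SHA256 so that the same contact always maps to the same token without exposing the underlying identifier. The function names are hypothetical.

```python
import hashlib
import hmac


def persistent_token(export_key: bytes, contact_id: str) -> str:
    """Derive a stable pseudonymous token for a contact (assumed scheme)."""
    digest = hmac.new(export_key, contact_id.encode("utf-8"), hashlib.sha256)
    # Truncated for readability; a real scheme would choose the length deliberately.
    return digest.hexdigest()[:16]


def export_social_graph(
    export_key: bytes, edges: list[tuple[str, str]]
) -> list[tuple[str, str]]:
    """Replace every identifier in an edge list with its persistent token."""
    return [
        (persistent_token(export_key, a), persistent_token(export_key, b))
        for a, b in edges
    ]


key = b"per-user-secret-held-by-the-exporting-user"
graph = [("alice@example.org", "bob@example.org"),
         ("alice@example.org", "carol@example.org")]
for edge in export_social_graph(key, graph):
    print(edge)
```

Because the token depends on the key, two exports made with the same key line up with each other, while someone without the key cannot recompute tokens from a list of known identifiers. Whether that is sufficient anonymisation for a given graph is a separate question that this sketch does not settle.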

Pillar 2 — Transparency as Infrastructure

Every covered platform must provide each user with a real-time dashboard displaying all collected data, a log of every third party with whom it has been shared, plain-language explanations of how data is used for ranking and AI training, and an estimated annual Data Dividend value. Algorithmic transparency reports must be published and subject to independent third-party audits for bias, discrimination, and manipulation risks.[4][2]

Pillar 3: Personal Data Agents

Reversing the model in which AI serves platforms at users’ expense, the framework proposes certified Personal Data Agents: AI tools that operate on behalf of users to audit terms of service, automate rights management, monitor data flows, and alert users to anomalies. Platforms must cooperate with certified agents and are prohibited from blocking, throttling, or discriminating against their traffic. This directly addresses Sanders’ concern about the trust deficit between users and AI companies: rather than trusting companies, users would deploy their own AI guardian operating under a legal duty of fidelity.[7][1][2]

Pillar 4: Digital Commons Fund and Collective Bargaining

The framework recognises that users who generate platform value deserve a share of it. Mechanisms include a mandatory Digital Commons Fund (a 2% contribution from covered platform revenue to finance public digital infrastructure, privacy-enhancing technology research, and digital literacy programs) alongside rights to collective bargaining through Digital User Associations. These provisions directly address the commodification of personal data that Sanders identified as the root of the problem.[1][3][2]

Pillar 5 — Enforcement With Teeth

The most important departure from existing legislation is the penalty structure. Civil penalties of up to the greater of $100,000 per affected user or 5% of global annual revenue are proposed for violations of user rights, a scale that makes non-compliance economically irrational for even the largest platforms. Criminal penalties including imprisonment are available for willful, systemic obstruction, and a private right of action gives individual users direct standing to sue.[2]
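A short worked calculation shows how the "greater of" ceiling behaves. This is one reading of the proposal, assuming the per-user figure is multiplied by the number of affected users and then compared with 5% of global annual revenue; the function name and the example figures are hypothetical.

```python
def max_civil_penalty(affected_users: int, global_annual_revenue: int) -> int:
    """Upper bound on the civil penalty, in whole dollars (assumed reading)."""
    per_user_total = 100_000 * affected_users
    # Integer arithmetic keeps the 5% share exact for whole-dollar inputs.
    revenue_share = global_annual_revenue * 5 // 100
    return max(per_user_total, revenue_share)


# Hypothetical platform with $10 billion in global annual revenue.
revenue = 10_000_000_000
print(max_civil_penalty(1_000, revenue))   # revenue share is the larger figure
print(max_civil_penalty(10_000, revenue))  # per-user total is the larger figure
```

With these inputs the ceiling is $500 million when 1,000 users are affected and $1 billion when 10,000 are affected, so the per-user term overtakes the revenue term once more than 5,000 users are involved.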

V. The Regulatory Architecture

Enforcement under the framework is vested in a dedicated, technically expert Digital Rights Commission (DRC) with powers to investigate complaints, conduct audits, issue fines, develop technical standards, certify Personal Data Agents, and publish annual state-of-digital-rights reports. The DRC model is explicitly designed to avoid the fragmented sectoral approach that has made US privacy enforcement chronically ineffective (HIPAA for health data, FERPA for education, CCPA in California only) by creating a unified federal body empowered to act across all covered platforms.[1][2]

The phased implementation schedule proposed in the framework provides a realistic pathway:[2]

| Phase | Timeline | Key Milestones |
| --- | --- | --- |
| Phase 1 | 0–12 months | Tier One export rights, transparency dashboards enforceable; DRC established; Personal Data Agent certification begins |
| Phase 2 | 12–24 months | Tier Two/Three portability; mandatory API standards; algorithmic transparency reporting |
| Phase 3 | 24–36 months | Digital Commons Fund contributions; collective bargaining framework; international coordination |

The Technical Implementation Platform

The companion Digital Sovereignty Implementation Platform (DSIP) specification provides the software architecture that makes these rights operationally real. The DSIP translates statutory rights into auditable workflows, standardised data packages, and interoperable APIs, ensuring that rights on paper translate to rights in practice. Core components include a Consent and Policy Gate, standardised export generation within statutory timelines, cryptographic deletion proof, and immutable audit logs accessible to regulators.[7]
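One common way to approximate an "immutable" audit log in software is a hash chain, in which each entry commits to the hash of the one before it. The sketch below is an assumption about how such a log might be built, not the DSIP's actual design; the `AuditLog` class and the event fields are hypothetical. Strictly speaking, the result is tamper-evident rather than immutable: edits are not prevented, but they become detectable.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(event: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def append(self, event: dict) -> dict:
        """Add an event, chaining it to the hash of the previous entry."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {"event": event, "prev_hash": prev_hash,
                 "hash": _entry_hash(event, prev_hash)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _entry_hash(entry["event"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"action": "export_requested", "user": "user-123"})
log.append({"action": "deletion_completed", "user": "user-123"})
print(log.verify())
```

A regulator holding only the latest hash could later check that the history presented to them has not been rewritten, which is the property the "accessible to regulators" requirement seems to call for.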

VI. Addressing the Core Democratic Threat

The fragmentation of political reality through AI microtargeting is not merely an electoral concern; it is a structural threat to the conditions that make democratic self-governance possible. When different voter segments receive completely different information environments optimised for their psychological vulnerabilities, the shared epistemic commons required for collective deliberation dissolves. The Age of Fragmented Selves framework identifies this as a civilisational-level risk requiring intervention at the infrastructure layer, not merely at the level of content moderation.[1][5]

Political Advertising Transparency

Existing campaign finance disclosure requirements were designed for broadcast media and are structurally inadequate for AI-enabled microtargeted advertising. The Digital Sovereignty Act framework’s algorithmic accountability provisions, combined with mandatory third-party disclosure logs, would make political profiling operations visible for the first time, creating a basis for regulatory intervention. Foreign-government access to detailed citizen profiles, which Claude identified as a particularly serious threat, would be addressed through the data broker provisions requiring disclosure and consent for all third-party data sales.[1][2][8]

VII. Responding to Industry Pushback

The framework anticipates four categories of industry resistance, each with a specific rebuttal:[6]

| Industry Claim | Policy Response |
| --- | --- |
| “Interoperability threatens security and privacy” | Open standards enable security research; closed systems hide vulnerabilities. Authenticated APIs can be more secure than current practices [2] |
| “Compliance costs destroy innovation” | Tiered requirements based on platform size; open-source compliance tools provided; only profitable large platforms face full requirements [3] |
| “Users don’t actually want this” | Users want control; complexity is a design challenge platforms must solve, not a reason to deny rights [6] |
| “We already comply via GDPR/CCPA” | Current export features are minimal, incomplete, and deliberately difficult to use: compliance theatre, not genuine empowerment [3] |

The key strategic insight from Sanders’ exchange is that industry lobbying power is greatest before legislation acquires political momentum. The moratorium threat, combined with citizen mobilisation and coalition-building with civil liberties organisations, labour unions, and small business groups harmed by platform data monopolies, creates the counter-pressure necessary to move legislation past the industry capture barrier.[6]

VIII. Recommendations

Based on the Sanders–Claude dialogue and the Digital Sovereignty Act framework, this white paper makes eight concrete recommendations:

- Pass a federal Digital Sovereignty Act with the non-waivable three-tier rights structure, explicit opt-in consent default, and penalty structure described above[2]

- Establish the Digital Rights Commission as an independent, technically expert federal body with genuine enforcement powers[2]

- Mandate real-time transparency dashboards on all covered platforms showing users what data is held, who has accessed it, and how it is used for algorithmic curation and AI training[4]

- Create a public AI Guardian infrastructure — certifying Personal Data Agents and funding their development as public utilities to level the information asymmetry between users and platforms[7]

- Regulate the data broker industry directly with mandatory disclosure, consent requirements, and a user right to see and contest all profiles held about them[1]

- Establish political microtargeting disclosure rules requiring real-time public logs of all AI-targeted political advertising with profile criteria disclosed[8]

- Authorise the DRC to impose a data centre construction moratorium as a conditional enforcement remedy when platforms systemically obstruct compliance — preserving it as a credible legislative lever[6]

- Ratify international coordination frameworks to prevent regulatory arbitrage and address the threat of foreign-government access to citizen profile data[1]

IX. Conclusion

Senator Sanders’ exchange with Claude distilled the AI privacy crisis to its essential moral core: your attention, your behaviour, and your choices have all become a commodity to be bought and sold. The Digital Sovereignty Act framework, developed in the attached conceptual corpus, provides a technically rigorous and politically actionable response to every dimension of that crisis, from unconsented data harvesting to democratic fragmentation to the structural problem of regulatory capture.[1][2]

Privacy, as Claude ultimately told Sanders, is not merely a personal issue. It is a democracy issue. When corporations and governments hold detailed AI-constructed profiles of millions of citizens, they possess a form of power over those citizens that fundamentally distorts the conditions for free choice and democratic self-governance. The framework proposed here is not an anti-technology agenda. It is a pro-democracy agenda, one that insists technology must serve human flourishing rather than extract from it.[5][2][1]

The window for meaningful action is narrowing as AI capabilities compound and data accumulation deepens. The time to act is now.[3]

This white paper draws conceptually from the Digital Sovereignty Act Policy Framework (February 2026), the DSIP Business Requirements and System Requirements Specification, the Digital Sovereignty Act Draft Legislation, the Age of Fragmented Selves, the Public Manifesto for Digital Sovereignty, the Identity Integrity Manifesto, the Strategic Implementation Plan, the Strategic Response to Pushback, and the Deepfake Governance Act Policy Paper.

References

- 01_Policy_Paper_Digital_Sovereignty_Act.docx

- THE-DIGITAL-SOVEREIGNTY-ACT-7.docx

- 03_Public_Manifesto_Digital_Sovereignty-3.docx

- 02_Draft_Legislation_Digital_Sovereignty_Act-2.docx

- The-Age-of-Fragmented-Selves-6.docx

- Strategic-response-to-push-back-11.docx

- BRD-and-SRS-Digital-Sovereignty-Implementation-Platform-DSIP-4.docx

- Deepfake_Governance_Act_Policy_Paper-8.docx

- The-Identity-Integrity-Manifesto-2-12.docx

- Strategic-Implementation-Plan-10.docx

- Deepfake_Stakeholder_Impact_Analysis-9.docx

- The-Identity-Integrity-Manifesto-13.docx

- Policy-Paper-5.docx