Preamble

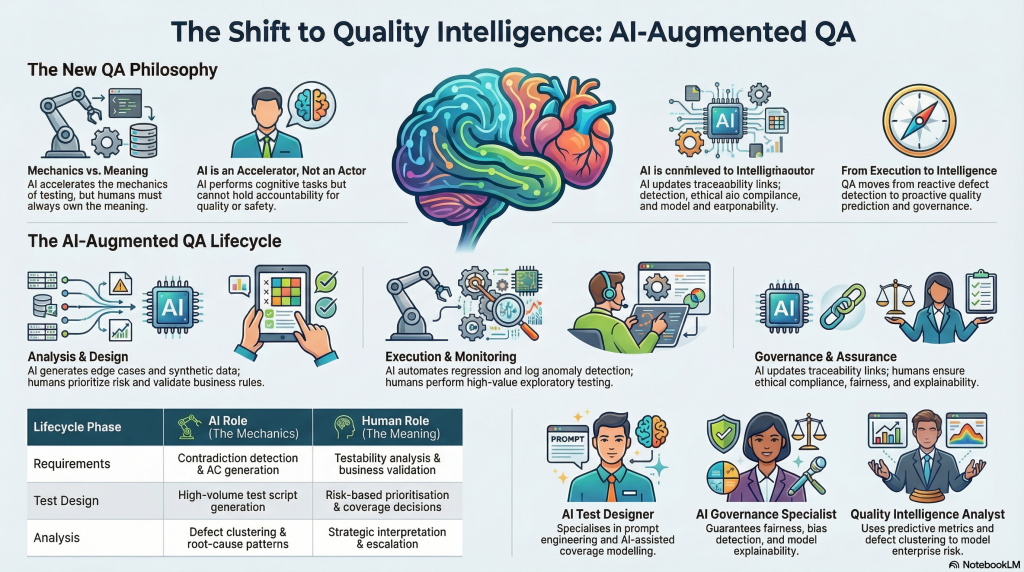

How artificial intelligence is rewriting the roles, responsibilities, and identity of software quality assurance, and what you need to do about it.

There’s a moment in every professional discipline’s history when the tools of the trade change so fundamentally that the discipline itself has to change with them. Accountants faced it with spreadsheets. Designers faced it with desktop publishing. Business analysts are living through it right now with generative AI. Quality assurance is next.

And unlike some professions that AI will nudge gently toward evolution, QA is being hit from multiple directions simultaneously. AI is automating the mechanical work that has defined the role for decades. It’s introducing entirely new failure modes that QA must now be responsible for catching. And it’s expanding the scope of “quality” into territory (model fairness, explainability, data lineage, drift) that most QA teams aren’t yet equipped to navigate.

This isn’t a threat to the profession. It’s an upgrade opportunity. But only if we take it seriously.

As usual, my references: QA-AI

| Name | Short description |
| --- | --- |
| QA AI outline handbook.docx | A structured handbook for AI-augmented QA covering lifecycle, roles, governance, traceability, AI-specific testing, metrics, operating model, skills, and public-sector requirements. |
| Quality Assurance in the Age of AI perspectives.docx | A multi-version document presenting the same core QA-and-AI theme for different audiences: executive, technical, training, and thought-leadership use. |
| Quality Assurance and Testing in the Age of AI.docx | A comprehensive long-form paper on how AI changes QA roles, governance, risks, SDLC practices, operating models, and future capabilities. |
| QA & Testing Personas for the QA Testing Life Cycle.docx | A persona-based guide to the modern QA lifecycle, outlining nine specialist roles, their strengths, lifecycle responsibilities, AI-augmented capabilities, managed risks, tool mapping, and RACI coverage. |

The Mechanics Are Being Automated. That’s Not Bad News.

Let’s start with what’s actually happening. AI can now do, or credibly assist with, most of what used to consume the bulk of a QA engineer’s time:

Generating test cases from requirements. Creating synthetic test data. Running regression suites. Clustering defects by root cause. Detecting anomalies in logs. Maintaining traceability matrices. Drafting documentation.

If your job description currently reads like that list, it’s worth pausing to think about what your job description should look like in three years.

But here’s the thing: automating the mechanics of a discipline doesn’t destroy the discipline. It elevates it. When compilers took over the job of manually tracking memory addresses, software engineers didn’t disappear; they started writing better software. When Excel replaced paper ledgers, accountants didn’t vanish; they started doing more interesting analysis.

The same principle applies to QA. AI accelerates the mechanics. It doesn’t own the meaning.

What AI Cannot Do, and Why That Matters

The capabilities gap in AI-assisted testing is not primarily technical. It’s cognitive and contextual.

AI cannot determine what matters to the business. It cannot resolve a conflict between two stakeholders who disagree on what “correct” actually means. It cannot perform the kind of exploratory testing that requires genuine curiosity: the tester who instinctively clicks the thing no one told them to click and finds the edge case the entire team missed.

More importantly, AI cannot be held accountable. This is not a limitation that will be engineered away. It is a structural fact about how AI systems exist in the world. When a system causes harm (when a benefits decision is wrong, when a financial transaction fails, when patient data is compromised), a human being has to be responsible. That accountability has to live somewhere. In QA, it lives with the professionals who sign off on quality.

There’s also a subtler problem: AI produces output that looks confident even when it’s wrong. A hallucinated test case reads like a real test case. A plausible-sounding acceptance criterion that subtly misrepresents the business rule will pass a surface-level review. AI can generate large volumes of tests that achieve impressive coverage metrics while completely missing the thing that matters.

Coverage is not quality. This is one of the oldest lessons in testing, and AI makes it more relevant, not less.

Non-Determinism Changes Everything

Traditional QA was built on a deterministic assumption: same inputs, same outputs, same behaviour. If the test passed yesterday, it will pass today. If the system was verified, it stays verified.

AI breaks this assumption at every level.

The AI tools QA teams use to generate tests can produce different outputs for the same prompt on different days. The AI systems QA teams are now being asked to test (the recommendation engines, the decision-support tools, the automated classifiers) produce outputs that vary, drift over time, and may behave differently across demographic groups in ways that are invisible to standard functional testing.

This creates new categories of risk that QA must now own:

Model drift. An AI system that was accurate and fair at deployment may become less so over time as the underlying data distribution shifts. Testing the system at release is no longer sufficient. Continuous monitoring becomes part of the QA function.

Fairness and bias. If an AI system makes different decisions for different groups of people (approving loans, screening applications, triaging support requests) and those differences aren’t justified by legitimate business logic, that’s a quality failure. It may also be a regulatory failure. QA needs to test for this.

Explainability. In regulated industries and public-sector contexts, it’s not enough that an AI system produces correct outputs. You need to be able to explain why. QA must now validate not just that the system behaves correctly, but that its behaviour can be articulated.

Data lineage. The quality of an AI system’s outputs depends heavily on the quality and provenance of its training data. QA needs to trace that lineage and validate it.
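As one illustration of what continuous monitoring for model drift might look like, the sketch below computes a population stability index (PSI) between a baseline sample captured at release and a more recent sample of the same input feature or model score. PSI is only one of several possible drift signals and is swapped in here as an example; the function name, the bin count, and the `epsilon` floor are assumptions made for this sketch, not part of any framework referenced in this article.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    epsilon: float = 1e-6,
) -> float:
    """Compare two samples by binning both on the baseline's value range."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if every baseline value is identical.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped to the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor each proportion at epsilon so the logarithm is always defined.
        return [max(c / len(values), epsilon) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Illustrative data only: a uniform baseline and a sample shifted upward.
baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]
psi = population_stability_index(baseline, recent)
```

A commonly quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift, but that is a convention rather than a standard, and any alert threshold should be agreed with the people who own the model.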

None of this is science fiction. These are real requirements that are being written into regulation, procurement frameworks, and organisational governance standards right now.
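The fairness and bias check described in this section can also be expressed as an ordinary automated test. The sketch below compares approval rates across groups and reports the ratio of the lowest rate to the highest. The function names, group labels, and data are hypothetical. The 0.8 threshold mentioned afterwards reflects the "four-fifths" guideline from US employment-selection practice; treat it as one possible starting point rather than a universal legal test.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Return the share of positive decisions for each group."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        approved[group] += int(was_approved)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions: Iterable[Tuple[str, bool]]) -> float:
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        # Nobody was approved in any group, so there is no disparity to report.
        return 1.0
    return min(rates.values()) / highest


# Illustrative data only: group A is approved 8 times out of 10, group B 4 out of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)
ratio = disparate_impact_ratio(sample)  # 0.4 / 0.8
```

A release gate could fail when `ratio` drops below an agreed threshold such as 0.8. A low ratio does not prove unjustified bias on its own; it flags a difference that someone accountable then has to explain.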

The Governance Problem Nobody Is Talking About

Here is the governance failure that’s quietly accumulating across development teams who’ve adopted AI tools enthusiastically but haven’t updated their process to match:

When AI generates a test case, who is responsible for it? When an AI tool updates a traceability matrix, how do you know what changed and why? When a prompt produces an acceptance criterion that gets committed to a backlog, is that criterion governed by the same change control process as a human-written one?

In most teams right now, the answer to all three questions is: nobody’s really thought about it.

This is the kind of technical debt that feels invisible until it isn’t. The artefact debt. The explainability debt. The traceability debt. The prompt debt: the accumulation of AI-assisted outputs whose provenance is undocumented and whose rationale can no longer be reconstructed.

The governance principles that address this aren’t complicated, but they require deliberate implementation:

Every AI-generated test artefact should be tagged with its provenance: human-authored, AI-assisted, or AI-generated. Prompts should be logged. Traceability must link requirements to tests to defects to fixes, and AI must write into that chain rather than bypassing it. AI-generated changes must go through the same review and approval processes as human-generated ones. No AI system should be approving its own outputs.

These aren’t bureaucratic constraints. They’re the conditions under which AI-assisted QA can be trusted.
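As a rough sketch of how those principles could be enforced in tooling rather than left to convention, the Python below models an artefact record with a provenance tag, a requirement link, and a logged-prompt reference, plus an approval gate. Every name here (`QaArtefact`, `Provenance`, `approve`, `prompt_log_ref`) is hypothetical, and the rule that authors cannot approve their own work is an added assumption about what "the same review and approval processes" would typically include.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Provenance(Enum):
    HUMAN_AUTHORED = "human-authored"
    AI_ASSISTED = "ai-assisted"
    AI_GENERATED = "ai-generated"


@dataclass
class QaArtefact:
    artefact_id: str
    requirement_id: str  # traceability link back to the requirement
    provenance: Provenance
    author: str  # the person or tool that produced the artefact
    prompt_log_ref: Optional[str] = None  # where the prompt was logged, if AI was involved
    approved_by: Optional[str] = None


def approve(artefact: QaArtefact, reviewer: str, reviewer_is_human: bool) -> None:
    """Record an approval only if the governance rules are satisfied."""
    ai_involved = artefact.provenance is not Provenance.HUMAN_AUTHORED
    if ai_involved and not artefact.prompt_log_ref:
        raise ValueError("AI-involved artefacts need a logged prompt reference")
    if not reviewer_is_human:
        raise PermissionError("an AI system may not approve artefacts")
    if reviewer == artefact.author:
        # Assumption for this sketch: no self-approval, for humans or tools.
        raise PermissionError("authors may not approve their own artefacts")
    artefact.approved_by = reviewer


# Illustrative usage with made-up identifiers.
case = QaArtefact(
    artefact_id="TC-001",
    requirement_id="REQ-042",
    provenance=Provenance.AI_GENERATED,
    author="test-generator-tool",
    prompt_log_ref="prompt-log/0001",
)
approve(case, reviewer="qa.lead", reviewer_is_human=True)
```

The design choice worth noting is that provenance and the prompt reference travel with the artefact itself, so the audit trail does not depend on anyone remembering how a test was produced.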

The New Shape of QA Work

If we accept that AI is handling more of the mechanical layer, what does the elevated QA function actually look like?

The honest answer is that it looks more like the work QA has always claimed to aspire to but rarely had time for.

Risk intelligence. Understanding which parts of a system carry the highest business risk and ensuring testing effort is concentrated there, not just distributed evenly across the feature backlog.

Requirements partnership. Working closely with BAs and product owners to ensure that acceptance criteria are testable, complete, and unambiguous before development starts, rather than discovering gaps during UAT.

Exploratory depth. The creative, curiosity-driven investigation that finds the failures no automated suite would think to look for.

Stakeholder translation. Taking complex quality signals (defect clusters, coverage gaps, test failures) and translating them into language that enables good business decisions.

AI assurance. The entirely new domain of validating AI systems for fairness, accuracy, drift, and explainability.

Governance design. Building and maintaining the traceability, version control, and change management frameworks that make AI-assisted delivery trustworthy.

This is a more cognitively demanding job than writing regression scripts. It’s also a more valuable one.

A New Career Topology

The QA profession is not going to consolidate into a single “AI-enabled QA engineer” role. It’s going to differentiate. The people who are good at test design and AI-assisted automation will diverge from the people who are good at governance and assurance. Subject-matter experts in regulated domains will develop in different directions from the generalists who work across platforms.

The broad shape of where QA careers are heading looks something like this:

There’s a delivery track — test designers who work fluidly with AI tools, automation engineers who build and maintain intelligent regression suites, UAT leads who orchestrate acceptance processes with business stakeholders.

There’s an insight track — quality intelligence analysts who work with defect data and predictive models, root-cause specialists who understand systems deeply enough to diagnose failure patterns rather than just report them.

There’s a governance track — AI assurance specialists who own explainability, fairness, and compliance testing; traceability leads who maintain the audit trail that regulators and auditors will eventually ask to see.

There’s an architecture track — senior practitioners who define enterprise quality standards, design the frameworks within which delivery teams operate, and sit at the table where decisions about risk and architecture get made.

The entry point to the profession will still be grounded in test execution and design fundamentals. But the trajectory is upward and outward, toward strategy, governance, and the kind of cross-functional influence that good QA work has always enabled but rarely been credited for.

What Teams Need to Do Now

The organisations that will navigate this transition well are not the ones waiting for the technology to stabilise before adapting their processes. They’re the ones building the governance infrastructure now, while the habits and expectations around AI-assisted work are still being formed.

Concretely, that means a few things.

Establish provenance tagging for AI-generated artefacts. Not as a bureaucratic exercise, but as a discipline that makes AI-assisted work auditable and trustworthy.

Build exploratory testing capacity deliberately. As AI handles more of the scripted regression work, the skills that distinguish elite testers (domain knowledge, curiosity, adversarial thinking) become more valuable, not less. Those skills need investment.

Expand the definition of quality to include AI-specific attributes. Fairness, explainability, drift resistance, and data lineage are quality concerns. They need to be on the test plan, not treated as someone else’s problem.

Invest in QA’s governance role at the programme level. The teams that will deliver trustworthy AI-assisted products are the ones where QA has a seat at the architecture table, not just the defect triage meeting.

The Future of QA Is Quality Intelligence

There’s a framing that captures the direction of travel well: QA is evolving from test execution to quality intelligence.

The test executor verifies that the thing that was built matches the specification. The quality intelligence function does that too, but it also asks whether the specification captured the right requirements, whether the system will behave safely and fairly at scale, whether the delivery process is generating the kind of debt that will cause problems later, and whether the organisation has the governance infrastructure to remain accountable as AI participates more deeply in delivery.

That’s a more ambitious and more important function. It’s also more resilient, because the value it creates doesn’t compress away when AI gets better at writing test scripts.

The version of QA that AI will eventually replace is the version defined entirely by manual execution, static documentation, and reactive defect detection. That version is already becoming less viable.

The version of QA that survives is the one that owns meaning, maintains accountability, assures governance, and ensures that in a world where AI participates in delivery, the humans in the loop are genuinely in the loop, not just signing off on outputs they haven’t interrogated.

AI won’t replace QA.

But QA professionals who use AI will replace those who don’t, and QA functions that evolve into quality intelligence will eventually outcompete those that don’t.

The question isn’t whether to adapt. It’s how fast.

This article draws on frameworks from AI-augmented requirements management, Agile-AI governance models, and emerging QA capability design for AI-enabled delivery teams. QA-AI